Heterogeneous System Architecture

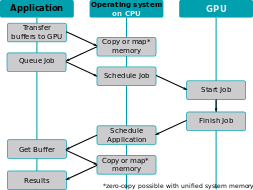

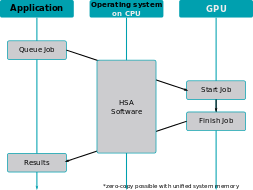

Heterogeneous System Architecture (HSA) is a cross-vendor set of specifications that allow for the integration of central processing units and graphics processors on the same bus, with shared memory and tasks.[1] The HSA is being developed by the HSA Foundation, which includes (among many others) AMD and ARM. The platform's stated aim is to reduce communication latency between CPUs, GPUs and other compute devices, and make these various devices more compatible from a programmer's perspective,[2]:3[3] relieving the programmer of the task of planning the moving of data between devices' disjoint memories (as must currently be done with OpenCL or CUDA).[4]

CUDA and OpenCL as well as most other fairly advanced programming languages can use HSA to increase their execution performance.[5] Heterogeneous computing is widely used in system-on-chip devices such as tablets, smartphones, other mobile devices, and video game consoles.[6] HSA allows programs to use the graphics processor for floating point calculations without separate memory or scheduling.[7]

Rationale

The rationale behind HSA is to ease the burden on programmers when offloading calculations to the GPU. Originally driven solely by AMD and called the Fusion System Architecture (FSA), the idea was later extended to encompass processing units other than GPUs, such as other manufacturers' DSPs.

Modern GPUs are very well suited to single instruction, multiple data (SIMD) and single instruction, multiple threads (SIMT) workloads, while modern CPUs are still optimized for branching and other control-flow-heavy work.

Overview

Originally introduced by embedded systems such as the Cell Broadband Engine, sharing system memory directly between multiple system actors makes heterogeneous computing more mainstream. Heterogeneous computing itself refers to systems that contain multiple processing units – central processing units (CPUs), graphics processing units (GPUs), digital signal processors (DSPs), or any type of application-specific integrated circuits (ASICs). The system architecture allows any accelerator, for instance a graphics processor, to operate at the same processing level as the system's CPU.

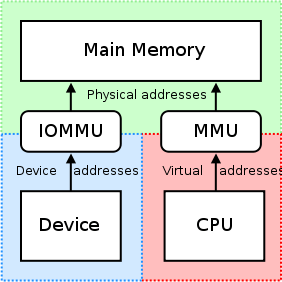

Among its main features, HSA defines a unified virtual address space for compute devices: where GPUs traditionally have their own memory, separate from the main (CPU) memory, HSA requires these devices to share page tables so that devices can exchange data by sharing pointers. This is to be supported by custom memory management units.[2]:6–7 To render interoperability possible and also to ease various aspects of programming, HSA is intended to be ISA-agnostic for both CPUs and accelerators, and to support high-level programming languages.
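The contrast between separate device memory and a shared address space can be illustrated with a minimal Python sketch. This is not HSA code: `CopyDevice` and `SharedDevice` are hypothetical classes that only model the two approaches, and the `bytes_copied` counter is an assumed stand-in for transfer cost.

```python
class CopyDevice:
    """Hypothetical device with its own memory: data is copied in and out."""

    def __init__(self):
        self.bytes_copied = 0

    def double_all(self, host_buf: bytearray) -> None:
        device_buf = bytearray(host_buf)      # host -> device copy
        self.bytes_copied += len(host_buf)
        for i in range(len(device_buf)):
            device_buf[i] = (device_buf[i] * 2) % 256
        host_buf[:] = device_buf              # device -> host copy
        self.bytes_copied += len(device_buf)


class SharedDevice:
    """Hypothetical device sharing the host address space: it gets a reference."""

    def __init__(self):
        self.bytes_copied = 0

    def double_all(self, host_buf: bytearray) -> None:
        view = memoryview(host_buf)           # same underlying memory, no copy
        for i in range(len(view)):
            view[i] = (view[i] * 2) % 256


data_a = bytearray(range(8))
data_b = bytearray(range(8))
copy_dev, shared_dev = CopyDevice(), SharedDevice()
copy_dev.double_all(data_a)
shared_dev.double_all(data_b)
```

Both devices produce the same result, but only the copy-based one pays for moving the buffer in each direction; the shared one works on the data where it already lives, which is the effect the shared page tables are meant to achieve.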

So far, the HSA specifications cover:

HSA Intermediate Language (HSAIL)

- a virtual instruction set for parallel programs

- similar to LLVM Intermediate Representation and SPIR (used by OpenCL and Vulkan)

- finalized to a specific instruction set by a JIT compiler

- makes late decisions on which core(s) should run a task

- explicitly parallel

- supports exceptions, virtual functions and other high-level features

- debugging support
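The idea of finalizing one virtual program for whichever device is chosen late can be sketched in Python. This is a toy model under stated assumptions, not HSAIL: the two-opcode instruction format, `finalize_for_cpu`, `finalize_for_gpu`, and `dispatch` are all hypothetical names invented for illustration.

```python
# Toy "virtual ISA": a kernel is a list of (opcode, constant) pairs
# applied in order to every input element.
KERNEL = [("mul", 3), ("add", 1)]


def apply(op, k, v):
    """Execute one virtual instruction on one value."""
    if op == "mul":
        return v * k
    if op == "add":
        return v + k
    raise ValueError(f"unknown opcode: {op}")


def finalize_for_cpu(kernel):
    """Hypothetical finalizer: run the whole kernel on one element at a time."""
    def run(values):
        out = []
        for v in values:
            for op, k in kernel:
                v = apply(op, k, v)
            out.append(v)
        return out
    return run


def finalize_for_gpu(kernel):
    """Hypothetical finalizer: apply each instruction across all elements."""
    def run(values):
        lanes = list(values)
        for op, k in kernel:
            lanes = [apply(op, k, v) for v in lanes]
        return lanes
    return run


FINALIZERS = {"cpu": finalize_for_cpu, "gpu": finalize_for_gpu}


def dispatch(kernel, values, target):
    """Choose the target late, then finalize the same virtual code for it."""
    return FINALIZERS[target](kernel)(values)
```

For example, `dispatch(KERNEL, [1, 2, 3], "cpu")` and `dispatch(KERNEL, [1, 2, 3], "gpu")` both return `[4, 7, 10]`: the program is written once, and only the finalization step differs per target.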

HSA memory model

- compatible with C++11, OpenCL, Java and .NET memory models

- relaxed consistency

- designed to support both managed languages (e.g. Java) and unmanaged languages (e.g. C)

- intended to make it much easier to develop third-party compilers for a wide range of heterogeneous products programmed in Fortran, C++, C++ AMP, Java, and other languages

HSA dispatcher and run-time

- designed to enable heterogeneous task queueing: a work queue per core, distribution of work into queues, load balancing by work stealing

- any core can schedule work for any other, including itself

- significant reduction of overhead of scheduling work for a core
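The queueing scheme listed above (one work queue per core, with idle cores stealing work) can be sketched as follows. This is a single-threaded simulation under assumed names (`Core`, `schedule`), not the HSA runtime API; a real implementation would run the cores concurrently.

```python
from collections import deque


class Core:
    """Hypothetical core with its own work queue (a double-ended queue)."""

    def __init__(self, name):
        self.name = name
        self.queue = deque()
        self.done = []


def schedule(cores):
    """Run rounds until every queue is empty; idle cores steal work."""
    while any(core.queue for core in cores):
        for core in cores:
            if core.queue:
                task = core.queue.pop()            # owner takes from its own end
            else:
                victim = max(cores, key=lambda c: len(c.queue))
                if not victim.queue:
                    continue                       # nothing left to steal
                task = victim.queue.popleft()      # thief takes from the other end
            core.done.append(task())


cpu, gpu = Core("cpu"), Core("gpu")
for n in range(6):
    # All work is initially queued on one core; any core could enqueue it.
    cpu.queue.append(lambda n=n: n * n)
schedule([cpu, gpu])
```

Although all six tasks start on the `cpu` queue, the idle `gpu` core steals half of them, so the load balances without a central scheduler deciding the split in advance.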

Mobile devices are one of HSA's application areas, in which it yields improved power efficiency.[6]

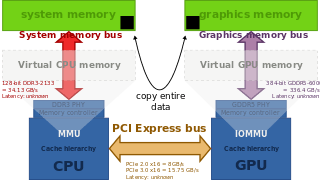

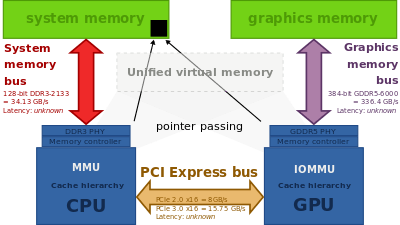

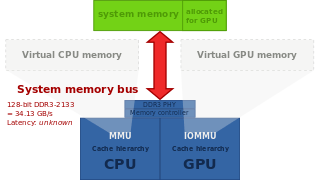

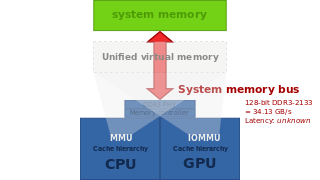

Block diagrams

The block diagrams below provide high-level illustrations of how HSA operates and how it compares to traditional architectures.

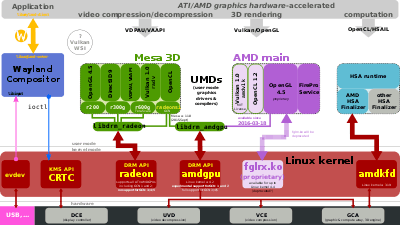

Software support

Some of the HSA-specific features implemented in the hardware need to be supported by the operating system kernel and specific device drivers. For example, support for AMD Radeon and AMD FirePro graphics cards, and APUs based on Graphics Core Next (GCN), was merged into version 3.19 of the Linux kernel mainline, released on February 8, 2015.[10] This first implementation, known as amdkfd, focuses on "Kaveri" or "Berlin" APUs and works alongside the existing Radeon kernel graphics driver. Programs do not interact directly with amdkfd, but queue their jobs using the HSA runtime.[11]

Additionally, amdkfd supports heterogeneous queuing (HQ), which aims to simplify the distribution of computational jobs among multiple CPUs and GPUs from the programmer's perspective. Support for heterogeneous memory management (HMM), suited only for graphics hardware featuring version 2 of AMD's IOMMU, was accepted into the Linux kernel mainline version 4.14.[12]

Integrated support for HSA platforms was announced for the "Sumatra" release of OpenJDK, then due in 2015.[13]

AMD APP SDK is AMD's proprietary software development kit targeting parallel computing, available for Microsoft Windows and Linux. Bolt is a C++ template library optimized for heterogeneous computing.[14]

GPUOpen includes several other software tools related to HSA. CodeXL version 2.0 includes an HSA profiler.[15]

Hardware support

AMD

As of February 2015, only AMD's "Kaveri" A-series APUs (cf. "Kaveri" desktop processors and "Kaveri" mobile processors) and Sony's PlayStation 4 allowed the integrated GPU to access memory via version 2 of AMD's IOMMU. Earlier APUs (Trinity and Richland) included the version 2 IOMMU functionality, but only for use by an external GPU connected via PCI Express.

Post-2015 Carrizo and Bristol Ridge APUs also include the version 2 IOMMU functionality for the integrated GPU.

| Brand | Llano | Trinity | Richland | Kaveri | Carrizo | Bristol Ridge | Raven Ridge | Desna, Ontario, Zacate | Kabini, Temash | Beema, Mullins | Carrizo-L | Stoney Ridge | |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Platform | Desktop, Mobile | Ultra-mobile | |||||||||||
| Released | Aug 2011 | Oct 2012 | Jun 2013 | Jan 2014 | Jun 2015 | Jun 2016 | Oct 2017 | Jan 2011 | May 2013 | Q2 2014 | May 2015 | June 2016 | |
| Fab. (nm) | GlobalFoundries 32 SOI | GlobalFoundries 28 SHP | GlobalFoundries 14LPP (FinFET) | TSMC 40 | 28 | ||||||||
| Die size (mm²) | 228 | 246 | 245 | 244.62 | 250.04 | 210[16] | 75 (+ 28 FCH) | ~107 | TBA | 125 | |||
| Socket | FM1, FS1 | FM2, FS1+, FP2 | FM2+, FP3 | FM2+[a], FP4 | AM4, FP4 | AM4, FP5 | FT1 | AM1, FT3 | FT3b | FP4 | FP4 | ||
| CPU micro-architecture | AMD 10h | Piledriver | Steamroller | Excavator | Zen | Bobcat | Jaguar | Puma | Puma+[17] | Excavator | |||
| Memory support | DDR3-1866 DDR3-1600 DDR3-1333 | DDR3-2133 DDR3-1866 DDR3-1600 DDR3-1333 | DDR4-2933 DDR4-2667 DDR4-2400 DDR4-2133 | DDR3L-1333 DDR3L-1066 | DDR3L-1866 DDR3L-1600 DDR3L-1333 DDR3L-1066 | DDR3L-1866 DDR3L-1600 DDR3L-1333 | Up to DDR4-2133 | ||||||
| 3D engine[b] | TeraScale (VLIW5) | TeraScale (VLIW4) | GCN 2nd Gen (Mantle, HSA) | GCN 3rd Gen (Mantle, HSA) | GCN 5th Gen[18] (Mantle, HSA) | TeraScale (VLIW5) | GCN 2nd Gen | GCN 3rd Gen[18] | |||||
| | Up to 400:20:8 | Up to 384:24:6 | Up to 512:32:8 | Up to 704:44:16[19] | 80:8:4 | 128:8:4 | Up to 192:?:? | |||||||
| | IOMMUv1 | IOMMUv2 | IOMMUv1[20] | TBA | TBA | |||||||||
| Video Decoder ASIC | UVD 3.0 | UVD 4.2 | UVD 6.0 | VCN 1.0[21] | UVD 3.0 | UVD 4.0 | UVD 4.2 | UVD 6.0 | UVD 6.3 | ||||
| Video Encoding ASIC | N/A | VCE 1.0 | VCE 2.0 | VCE 3.1 | N/A | VCE 2.0 | VCE 3.1 | ||||||
| GPU power saving | PowerPlay | PowerTune | N/A | PowerTune[22] | |||||||||
| Max. displays[c] | 2–3 | 2–4 | 2–4 | 3 | 4 | TBA | 2 | TBA | TBA | ||||
| TrueAudio | N/A | N/A[20] | TBA | ||||||||||
| FreeSync | N/A | 1 2 | N/A | TBA | |||||||||
| HDCP[d] | ? | 1.4 | 1.4 2.2 | ? | 1.4 | ||||||||
| PlayReady[d] | ? | 3.0 (upcoming) | ? | ||||||||||
| /drm/radeon[e][25][26] | N/A | N/A | |||||||||||
| /drm/amdgpu[e][27] | N/A | N/A | |||||||||||

- [a] No APU models. Athlon X4 845 only.

- [b] Unified shaders : texture mapping units : render output units

- [c] To feed more than two displays, the additional panels must have native DisplayPort support.[23] Alternatively, active DisplayPort-to-DVI/HDMI/VGA adapters can be employed.

- [d] To play protected video content, it also requires card, operating system, driver, and application support. A compatible HDCP display is also needed for this. HDCP is mandatory for the output of certain audio formats, placing additional constraints on the multimedia setup.

- [e] DRM (Direct Rendering Manager) is a component of the Linux kernel. Support in this table refers to the most current version.

ARM

ARM's Bifrost microarchitecture, as implemented in the Mali-G71,[29] is fully compliant with the HSA 1.1 hardware specifications. As of June 2016, ARM had not announced software support that would use this hardware feature.

References

- [1] Tarun Iyer (30 April 2013). "AMD Unveils its Heterogeneous Uniform Memory Access (hUMA) Technology". Tom's Hardware.

- [2] George Kyriazis (30 August 2012). Heterogeneous System Architecture: A Technical Review (PDF) (Report). AMD.

- [3] "What is Heterogeneous System Architecture (HSA)?". AMD. Retrieved 23 May 2014.

- [4] Joel Hruska (2013-08-26). "Setting HSAIL: AMD explains the future of CPU/GPU cooperation". ExtremeTech. Ziff Davis.

- [5] Linaro. "LCE13: Heterogeneous System Architecture (HSA) on ARM". slideshare.net.

- [6] "Heterogeneous System Architecture: Purpose and Outlook". gpuscience.com. 2012-11-09. Archived from the original on 2014-02-01. Retrieved 2014-05-24.

- [7] "Heterogeneous system architecture: Multicore image processing using a mix of CPU and GPU elements". Embedded Computing Design. Retrieved 23 May 2014.

- [8] "Kaveri microarchitecture". SemiAccurate. 2014-01-15.

- [9] Michael Larabel (July 21, 2014). "AMDKFD Driver Still Evolving For Open-Source HSA On Linux". Phoronix. Retrieved January 21, 2015.

- [10] "Linux kernel 3.19, Section 1.3. HSA driver for AMD GPU devices". kernelnewbies.org. February 8, 2015. Retrieved February 12, 2015.

- [11] "HSA-Runtime-Reference-Source/README.md at master". github.com. November 14, 2014. Retrieved February 12, 2015.

- [12] "Linux Kernel 4.14 Announced with Secure Memory Encryption and More". 13 November 2017.

- [13] Alex Woodie (26 August 2013). "HSA Foundation Aims to Boost Java's GPU Prowess". HPCwire.

- [14] "Bolt on github".

- [15] AMD GPUOpen (2016-04-19). "CodeXL 2.0 includes HSA profiler".

- [16] "The Mobile CPU Comparison Guide Rev. 13.0 Page 5 : AMD Mobile CPU Full List". TechARP.com. Retrieved 13 December 2017.

- [17] "AMD Mobile "Carrizo" Family of APUs Designed to Deliver Significant Leap in Performance, Energy Efficiency in 2015" (Press release). 2014-11-20. Retrieved 2015-02-16.

- [18] "AMD VEGA10 and VEGA11 GPUs spotted in OpenCL driver". VideoCardz.com. Retrieved 6 June 2017.

- [19] Cutress, Ian (1 February 2018). "Zen Cores and Vega: Ryzen APUs for AM4 - AMD Tech Day at CES: 2018 Roadmap Revealed, with Ryzen APUs, Zen+ on 12nm, Vega on 7nm". Anandtech. Retrieved 7 February 2018.

- [20] Thomas De Maesschalck (2013-11-14). "AMD teases Mullins and Beema tablet/convertibles APU". Retrieved 2015-02-24.

- [21] Larabel, Michael (17 November 2017). "Radeon VCN Encode Support Lands In Mesa 17.4 Git". Phoronix. Retrieved 20 November 2017.

- [22] Tony Chen; Jason Greaves, "AMD's Graphics Core Next (GCN) Architecture" (PDF), AMD, retrieved 2016-08-13

- [23] "How do I connect three or More Monitors to an AMD Radeon™ HD 5000, HD 6000, and HD 7000 Series Graphics Card?". AMD. Retrieved 2014-12-08.

- [24] "A technical look at AMD's Kaveri architecture". Semi Accurate. Retrieved 6 July 2014.

- [25] Airlie, David (2009-11-26). "DisplayPort supported by KMS driver mainlined into Linux kernel 2.6.33". Retrieved 2016-01-16.

- [26] "Radeon feature matrix". freedesktop.org. Retrieved 2016-01-10.

- [27] Deucher, Alexander (2015-09-16). "XDC2015: AMDGPU" (PDF). Retrieved 2016-01-16.

- [28] Michel Dänzer (2016-11-17). "[ANNOUNCE] xf86-video-amdgpu 1.2.0". lists.x.org.

- [29] "ARM Bifrost GPU Architecture". 2016-05-30.

External links

- HSA Heterogeneous System Architecture Overview on YouTube by Vinod Tipparaju at SC13 in November 2013

- HSA and the software ecosystem

- 2012 – HSA by Michael Houston