Conditional independence

In probability theory, two random events A and B are conditionally independent given a third event C precisely if the occurrence of A and the occurrence of B are independent events in their conditional probability distribution given C. In other words, A and B are conditionally independent given C if and only if, given knowledge that C occurs, knowledge of whether A occurs provides no information on the likelihood of B occurring, and knowledge of whether B occurs provides no information on the likelihood of A occurring.

The concept of conditional independence can be extended from random events to random variables and random vectors.

Conditional independence of events

Definition

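In the standard notation of probability theory, A and B are conditionally independent given C if and only if Pr(A ∩ B | C) = Pr(A | C) Pr(B | C). Conditional independence of A and B given C is denoted by (A ⊥⊥ B) | C. Formally:

$$(A \perp\!\!\!\perp B) \mid C \quad \iff \quad \Pr(A \cap B \mid C) = \Pr(A \mid C)\,\Pr(B \mid C) \tag{Eq.1}$$

or equivalently,

$$(A \perp\!\!\!\perp B) \mid C \quad \iff \quad \Pr(A \mid B \cap C) = \Pr(A \mid C)$$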

Examples

The discussion on StackExchange provides a couple of useful examples. See below.[1]

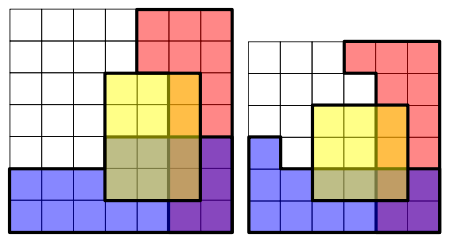

Coloured boxes

Each cell represents a possible outcome. The events R, B and Y are represented by the areas shaded red, blue and yellow respectively. The overlap between the events R and B is shaded purple.

The probabilities of these events are shaded areas with respect to the total area. In both examples R and B are conditionally independent given Y because:[2]

$$\Pr(R \cap B \mid Y) = \Pr(R \mid Y)\,\Pr(B \mid Y)$$

but not conditionally independent given not Y because:

$$\Pr(R \cap B \mid \text{not } Y) \neq \Pr(R \mid \text{not } Y)\,\Pr(B \mid \text{not } Y)$$

Weather and delays

Let the two events be that persons A and B each get home in time for dinner, and let the third event be that a snow storm has hit the city. The storm lowers the probability that either A or B gets home in time, but given the storm the two events are still independent of each other: the knowledge that A is late does not tell you whether B will be late. (They may be living in different neighborhoods, traveling different distances, and using different modes of transportation.) However, if you have information that they live in the same neighborhood, use the same transportation, and work at the same place, then the two events are not conditionally independent.

Dice rolling

Conditional independence depends on the nature of the third event. If you roll two dice, one may assume that the two dice behave independently of each other: looking at the result of one die tells you nothing about the result of the other. If, however, the first die shows a 3 and someone tells you about a third event, that the sum of the two results is even, then this extra piece of information restricts the second result to an odd number. In other words, two events can be independent but not conditionally independent.
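The dice example can be checked by enumerating all 36 outcomes. The following is a minimal sketch, not part of the original discussion: the helper names `pr` and `independent` and the particular choice of events ("first die shows 3", "second die is odd", "sum is even") are assumptions made for illustration.

```python
from fractions import Fraction
from itertools import product

# Sample space: all 36 equally likely outcomes of two fair dice.
OMEGA = set(product(range(1, 7), repeat=2))

def pr(event, given=None):
    """Pr(event | given) under the uniform distribution on OMEGA."""
    given = OMEGA if given is None else given
    return Fraction(len(event & given), len(given))

def independent(a, b, given=None):
    """True if Pr(a and b | given) == Pr(a | given) * Pr(b | given)."""
    return pr(a & b, given) == pr(a, given) * pr(b, given)

# Illustrative events (chosen for this sketch).
first_is_3 = {w for w in OMEGA if w[0] == 3}
second_is_odd = {w for w in OMEGA if w[1] % 2 == 1}
sum_is_even = {w for w in OMEGA if (w[0] + w[1]) % 2 == 0}

print(independent(first_is_3, second_is_odd))               # True
print(independent(first_is_3, second_is_odd, sum_is_even))  # False
print(pr(second_is_odd, first_is_3 & sum_is_even))          # 1
```

Exact fractions are used instead of floating point so that the equality test in `independent` is not affected by rounding.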

Height and vocabulary of children

Height and vocabulary of children are not independent, since both tend to increase with age; but they are conditionally independent given age.

Conditional independence of random variables

Two random variables X and Y are conditionally independent given a third random variable Z if and only if they are independent in their conditional probability distribution given Z. That is, X and Y are conditionally independent given Z if and only if, given any value of Z, the probability distribution of X is the same for all values of Y and the probability distribution of Y is the same for all values of X. Formally:

$$(X \perp\!\!\!\perp Y) \mid Z \quad \iff \quad F_{X,Y \mid Z=z}(x,y) = F_{X \mid Z=z}(x) \cdot F_{Y \mid Z=z}(y) \quad \text{for all } x, y, z \tag{Eq.2}$$

where $F_{X,Y \mid Z=z}(x,y) = \Pr(X \leq x, Y \leq y \mid Z = z)$ is the conditional cumulative distribution function of X and Y given Z.

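Two events R and B are conditionally independent given a σ-algebra Σ if

$$\Pr(R \cap B \mid \Sigma) = \Pr(R \mid \Sigma)\,\Pr(B \mid \Sigma) \quad \text{a.s.}$$

where $\Pr(A \mid \Sigma)$ denotes the conditional expectation of the indicator function of the event A, $\chi_A$, given the σ-algebra Σ. That is,

$$\Pr(A \mid \Sigma) := \operatorname{E}[\chi_A \mid \Sigma]$$

Two random variables X and Y are conditionally independent given a σ-algebra Σ if the above equation holds for all R in σ(X) and B in σ(Y).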

Two random variables X and Y are conditionally independent given a random variable W if they are independent given σ(W): the σ-algebra generated by W. This is commonly written:

- X ⊥⊥ Y | W or
- X ⊥ Y | W

This is read "X is independent of Y, given W"; the conditioning applies to the whole statement: "(X is independent of Y) given W".

If W assumes a countable set of values, this is equivalent to the conditional independence of X and Y for the events of the form [W = w]. Conditional independence of more than two events, or of more than two random variables, is defined analogously.

The following two examples show that X ⊥⊥ Y neither implies nor is implied by (X ⊥⊥ Y) | W.

First, suppose W is 0 with probability 0.5 and 1 otherwise. When W = 0, take X and Y to be independent, each having the value 0 with probability 0.99 and the value 1 otherwise. When W = 1, X and Y are again independent, but this time they take the value 1 with probability 0.99. Then (X ⊥⊥ Y) | W. But X and Y are dependent, because Pr(X = 0) < Pr(X = 0 | Y = 0). This is because Pr(X = 0) = 0.5, but if Y = 0 then it is very likely that W = 0 and thus that X = 0 as well, so Pr(X = 0 | Y = 0) > 0.5.

For the second example, suppose X ⊥⊥ Y, each taking the values 0 and 1 with probability 0.5. Let W be the product X · Y. Then, given W = 0, Pr(X = 0 | W = 0) = 2/3, but Pr(X = 0 | Y = 0, W = 0) = 1/2, so (X ⊥⊥ Y) | W is false. This is also an example of explaining away. See Kevin Murphy's tutorial [3] where X and Y take the values "brainy" and "sporty".
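Both examples are small enough to verify by exact enumeration. The sketch below is illustrative only: the names `pr`, `independent`, `joint1` and `joint2` are introduced here and do not come from the source. Each joint distribution is stored as a dictionary from outcomes (x, y, w) to exact probabilities.

```python
from fractions import Fraction
from itertools import product

def pr(joint, pred):
    """Probability of the outcomes (x, y, w) that satisfy pred."""
    return sum(p for outcome, p in joint.items() if pred(*outcome))

def independent(joint, w=None):
    """Check X ⊥ Y, or X ⊥ Y given W = w when w is not None."""
    cond = (lambda x, y, ww: True) if w is None else (lambda x, y, ww: ww == w)
    pc = pr(joint, cond)  # probability of the conditioning event
    for a, b in product((0, 1), repeat=2):
        pxy = pr(joint, lambda x, y, ww: cond(x, y, ww) and x == a and y == b) / pc
        px = pr(joint, lambda x, y, ww: cond(x, y, ww) and x == a) / pc
        py = pr(joint, lambda x, y, ww: cond(x, y, ww) and y == b) / pc
        if pxy != px * py:
            return False
    return True

# First example: W is a fair coin; given W, X and Y are independent
# and each equals W with probability 0.99.
hi, lo = Fraction(99, 100), Fraction(1, 100)
joint1 = {
    (x, y, w): Fraction(1, 2) * (hi if x == w else lo) * (hi if y == w else lo)
    for x, y, w in product((0, 1), repeat=3)
}

# Second example: X and Y are independent fair coins and W = X * Y.
joint2 = {(x, y, x * y): Fraction(1, 4) for x, y in product((0, 1), repeat=2)}

p_x0_given_y0 = (pr(joint1, lambda x, y, w: x == 0 and y == 0)
                 / pr(joint1, lambda x, y, w: y == 0))

print(independent(joint1, w=0), independent(joint1, w=1))  # True True
print(independent(joint1))                                 # False
print(p_x0_given_y0)                                       # 4901/5000
print(independent(joint2))                                 # True
print(independent(joint2, w=0))                            # False
```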

Conditional independence of random vectors

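Two random vectors $\mathbf{X} = (X_1, \ldots, X_l)^{\mathrm{T}}$ and $\mathbf{Y} = (Y_1, \ldots, Y_m)^{\mathrm{T}}$ are conditionally independent given a third random vector $\mathbf{Z} = (Z_1, \ldots, Z_n)^{\mathrm{T}}$ if and only if they are independent in their conditional cumulative distribution given $\mathbf{Z}$. Formally:

$$(\mathbf{X} \perp\!\!\!\perp \mathbf{Y}) \mid \mathbf{Z} \quad \iff \quad F_{\mathbf{X},\mathbf{Y} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{x},\mathbf{y}) = F_{\mathbf{X} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{x}) \cdot F_{\mathbf{Y} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{y}) \quad \text{for all } \mathbf{x}, \mathbf{y}, \mathbf{z} \tag{Eq.3}$$

where $\mathbf{x} = (x_1, \ldots, x_l)^{\mathrm{T}}$, $\mathbf{y} = (y_1, \ldots, y_m)^{\mathrm{T}}$ and $\mathbf{z} = (z_1, \ldots, z_n)^{\mathrm{T}}$ and the conditional cumulative distributions are defined as follows.

$$\begin{aligned}
F_{\mathbf{X},\mathbf{Y} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{x},\mathbf{y}) &= \Pr(X_1 \leq x_1, \ldots, X_l \leq x_l, Y_1 \leq y_1, \ldots, Y_m \leq y_m \mid Z_1 = z_1, \ldots, Z_n = z_n) \\
F_{\mathbf{X} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{x}) &= \Pr(X_1 \leq x_1, \ldots, X_l \leq x_l \mid Z_1 = z_1, \ldots, Z_n = z_n) \\
F_{\mathbf{Y} \mid \mathbf{Z}=\mathbf{z}}(\mathbf{y}) &= \Pr(Y_1 \leq y_1, \ldots, Y_m \leq y_m \mid Z_1 = z_1, \ldots, Z_n = z_n)
\end{aligned}$$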

Uses in Bayesian inference

Let p be the proportion of voters who will vote "yes" in an upcoming referendum. In taking an opinion poll, one chooses n voters randomly from the population. For i = 1, ..., n, let Xi = 1 if the ith chosen voter will vote "yes" and Xi = 0 otherwise.

In a frequentist approach to statistical inference one would not attribute any probability distribution to p (unless the probabilities could be somehow interpreted as relative frequencies of occurrence of some event or as proportions of some population) and one would say that X1, ..., Xn are independent random variables.

By contrast, in a Bayesian approach to statistical inference, one would assign a probability distribution to p regardless of the non-existence of any such "frequency" interpretation, and one would construe the probabilities as degrees of belief that p is in any interval to which a probability is assigned. In that model, the random variables X1, ..., Xn are not independent, but they are conditionally independent given the value of p. In particular, if a large number of the Xs are observed to be equal to 1, that would imply a high conditional probability, given that observation, that p is near 1, and thus a high conditional probability, given that observation, that the next X to be observed will be equal to 1.
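The Bayesian claim can be made concrete under one specific, assumed prior. The sketch below takes p to be uniform on [0, 1]; this prior is an assumption for illustration and is not specified in the text above. Given p, the Xi are independent with Pr(Xi = 1 | p) = p, so the probability that the first k voters all answer "yes" is the integral of p^k over [0, 1], which is 1/(k + 1). The function names are hypothetical.

```python
from fractions import Fraction

def prob_all_yes(k: int) -> Fraction:
    """Pr(X1 = ... = Xk = 1) under a uniform prior on p.

    Given p, the Xi are independent, so the conditional probability is p**k;
    averaging over the uniform prior gives the integral of p**k on [0, 1].
    """
    return Fraction(1, k + 1)

def prob_next_yes(k: int) -> Fraction:
    """Pr(X_{k+1} = 1 | X1 = ... = Xk = 1) under the same uniform prior."""
    return prob_all_yes(k + 1) / prob_all_yes(k)

marginal = prob_all_yes(1)  # Pr(X1 = 1) = 1/2
joint = prob_all_yes(2)     # Pr(X1 = 1, X2 = 1) = 1/3

# Marginally the Xi are dependent: the joint is not the product of the marginals.
print(joint == marginal * marginal)  # False

# Observing ten "yes" answers raises the probability that the next one is "yes".
print(prob_next_yes(10))  # 11/12
```

With a different prior the numbers change, but the pattern is the same: conditioning on p makes the Xi independent, while averaging over p couples them.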

Rules of conditional independence

A set of rules governing statements of conditional independence has been derived from the basic definition.[4][5]

Note: since these implications hold for any probability space, they will still hold if one considers a sub-universe by conditioning everything on another variable, say K. For example, X ⊥⊥ Y ⇒ Y ⊥⊥ X would also mean that X ⊥⊥ Y | K ⇒ Y ⊥⊥ X | K.

Note: below, the comma can be read as an "AND".

Symmetry

$$X \perp\!\!\!\perp Y \quad \Rightarrow \quad Y \perp\!\!\!\perp X$$

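Decomposition

$$X \perp\!\!\!\perp A, B \quad \Rightarrow \quad \text{ and } \begin{cases} X \perp\!\!\!\perp A \\ X \perp\!\!\!\perp B \end{cases}$$

Proof:

- $p_{X,A,B}(x,a,b) = p_X(x)\,p_{A,B}(a,b)$ (meaning of $X \perp\!\!\!\perp A, B$)

- $\int_B p_{X,A,B}(x,a,b)\,db = \int_B p_X(x)\,p_{A,B}(a,b)\,db$ (ignore variable B by integrating it out)

- $p_{X,A}(x,a) = p_X(x)\,p_A(a)$

A similar proof shows the independence of X and B.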

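Weak union

$$X \perp\!\!\!\perp A, B \quad \Rightarrow \quad \text{ and } \begin{cases} X \perp\!\!\!\perp A \mid B \\ X \perp\!\!\!\perp B \mid A \end{cases}$$

Proof:

- By definition, Pr(X) = Pr(X | A, B).

- Due to the property of decomposition X ⊥⊥ B, Pr(X) = Pr(X | B).

- Combining the above two equalities gives Pr(X | B) = Pr(X | A, B), which establishes X ⊥⊥ A | B.

The second condition can be proved similarly.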

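Contraction

$$\left.\begin{aligned} X \perp\!\!\!\perp A \mid B \\ X \perp\!\!\!\perp B \end{aligned}\right\} \text{ and } \quad \Rightarrow \quad X \perp\!\!\!\perp A, B$$

Proof:

This property can be proved by noticing Pr(X | A, B) = Pr(X | B) = Pr(X), each equality of which is asserted by X ⊥⊥ A | B and X ⊥⊥ B, respectively.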
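The decomposition, weak union and contraction rules can be spot-checked numerically on a small discrete distribution. The sketch below is a sanity check on one example, not a proof. The joint distribution, the helper names `marg` and `indep`, and the index constants `X`, `A`, `B` are all assumptions introduced for illustration. The distribution is built so that X is independent of the pair (A, B), while A and B depend on each other.

```python
from fractions import Fraction
from itertools import product

# Hypothetical joint pmf p(x, a, b) = p_X(x) * p_AB(a, b): X ⊥ (A, B) holds by
# construction, while A and B are deliberately dependent on each other.
p_x = {0: Fraction(1, 3), 1: Fraction(2, 3)}
p_ab = {(0, 0): Fraction(2, 5), (0, 1): Fraction(1, 10),
        (1, 0): Fraction(1, 10), (1, 1): Fraction(2, 5)}
joint = {(x, a, b): p_x[x] * p_ab[a, b] for x, a, b in product((0, 1), repeat=3)}

X, A, B = (0,), (1,), (2,)  # coordinate indices of each variable in an outcome

def marg(keep):
    """Marginal pmf over the coordinates listed in keep."""
    out = {}
    for outcome, p in joint.items():
        key = tuple(outcome[i] for i in keep)
        out[key] = out.get(key, 0) + p
    return out

def indep(u, v, given=()):
    """Check U ⊥ V | given; u, v and given are tuples of coordinate indices."""
    puvg, pug = marg(u + v + given), marg(u + given)
    pvg, pg = marg(v + given), marg(given)
    for outcome in joint:
        uu = tuple(outcome[i] for i in u)
        vv = tuple(outcome[i] for i in v)
        gg = tuple(outcome[i] for i in given)
        # Division-free form of the definition: p(u,v,g) p(g) == p(u,g) p(v,g).
        if puvg[uu + vv + gg] * pg[gg] != pug[uu + gg] * pvg[vv + gg]:
            return False
    return True

print(indep(X, A + B))                             # premise: True
print(indep(X, A), indep(X, B))                    # decomposition: True True
print(indep(X, A, given=B), indep(X, B, given=A))  # weak union: True True
print(indep(A, B))                                 # A and B are dependent: False
```

For contraction, the same run shows that the premises X ⊥⊥ A | B and X ⊥⊥ B hold together with the conclusion X ⊥⊥ A, B on this example.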

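Contraction-weak-union-decomposition

Putting the above three together, we have:

$$\left.\begin{aligned} X \perp\!\!\!\perp A \mid B \\ X \perp\!\!\!\perp B \end{aligned}\right\} \text{ and } \quad \iff \quad X \perp\!\!\!\perp A, B \quad \Rightarrow \quad \text{ and } \begin{cases} X \perp\!\!\!\perp A \mid B \\ X \perp\!\!\!\perp B \\ X \perp\!\!\!\perp B \mid A \\ X \perp\!\!\!\perp A \end{cases}$$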

References

- Could someone explain conditional independence?

- To see that this is the case, one needs to realise that Pr(R ∩ B | Y) is the probability of an overlap of R and B (the purple shaded area) in the Y area. Since, in the picture on the left, there are two squares where R and B overlap within the Y area, and the Y area has twelve squares, Pr(R ∩ B | Y) = 2/12 = 1/6. Similarly, Pr(R | Y) = 4/12 = 1/3 and Pr(B | Y) = 6/12 = 1/2.

- http://people.cs.ubc.ca/~murphyk/Bayes/bnintro.html

- Dawid, A. P. (1979). "Conditional Independence in Statistical Theory". Journal of the Royal Statistical Society, Series B. 41 (1): 1–31. JSTOR 2984718. MR 0535541.

- Pearl, Judea (2000). Causality: Models, Reasoning, and Inference. Cambridge University Press.

- Pearl, Judea; Paz, Azaria (1985). "Graphoids: A Graph-Based Logic for Reasoning About Relevance Relations".

- Pearl, Judea (1988). Probabilistic reasoning in intelligent systems: networks of plausible inference. Morgan Kaufmann.
