Timeline of machine learning

This page is a timeline of machine learning. Major discoveries, achievements, milestones and other major events are included.

Overview

| Decade | Summary |

|---|---|

| <1950s | Statistical methods are discovered and refined. |

| 1950s | Pioneering machine learning research is conducted using simple algorithms. |

| 1960s | Bayesian methods are introduced for probabilistic inference in machine learning.[1] |

| 1970s | 'AI Winter' caused by pessimism about machine learning effectiveness. |

| 1980s | Rediscovery of backpropagation causes a resurgence in machine learning research. |

| 1990s | Work on machine learning shifts from a knowledge-driven approach to a data-driven approach. Scientists begin creating programs for computers to analyze large amounts of data and draw conclusions – or "learn" – from the results.[2] Support vector machines (SVMs) and recurrent neural networks (RNNs) become popular.[3] The fields of computational complexity via neural networks and super-Turing computation are started.[4] |

| 2000s | Support vector clustering[5] and other kernel methods[6] and unsupervised machine learning methods become widespread.[7] |

| 2010s | Deep learning becomes feasible, which leads to machine learning becoming integral to many widely used software services and applications. |

Timeline

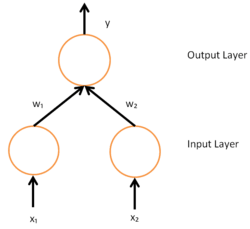

A simple neural network with two input units and one output unit

| Year | Event type | Caption | Event |

|---|---|---|---|

| 1763 | Discovery | The Underpinnings of Bayes' Theorem | Thomas Bayes's work An Essay towards solving a Problem in the Doctrine of Chances is published two years after his death, having been amended and edited by a friend of Bayes, Richard Price.[8] The essay presents work which underpins Bayes' theorem. |

| 1805 | Discovery | Least Squares | Adrien-Marie Legendre describes the "méthode des moindres carrés", known in English as the least squares method.[9] The least squares method is used widely in data fitting. |

| 1812 | | Bayes' Theorem | Pierre-Simon Laplace publishes Théorie Analytique des Probabilités, in which he expands upon the work of Bayes and defines what is now known as Bayes' Theorem.[10] |

| 1913 | Discovery | Markov Chains | Andrey Markov first describes techniques he used to analyse a poem. The techniques later become known as Markov chains.[11] |

| 1950 | | Turing's Learning Machine | Alan Turing proposes a 'learning machine' that could learn and become artificially intelligent. Turing's specific proposal foreshadows genetic algorithms.[12] |

| 1951 | | First Neural Network Machine | Marvin Minsky and Dean Edmonds build SNARC, the first neural network machine able to learn.[13] |

| 1952 | | Machines Playing Checkers | Arthur Samuel joins IBM's Poughkeepsie Laboratory and begins working on some of the very first machine learning programs, first creating programs that play checkers.[14] |

| 1957 | Discovery | Perceptron | Frank Rosenblatt invents the perceptron while working at the Cornell Aeronautical Laboratory.[15] The invention of the perceptron generated a great deal of excitement and was widely covered in the media.[16] |

| 1963 | Achievement | Machines Playing Tic-Tac-Toe | Donald Michie creates a 'machine' consisting of 304 match boxes and beads, which uses reinforcement learning to play Tic-tac-toe (also known as noughts and crosses).[17] |

| 1967 | | Nearest Neighbor | The nearest neighbor algorithm is created, marking the start of basic pattern recognition. The algorithm is used to map routes.[2] |

| 1969 | | Limitations of Neural Networks | Marvin Minsky and Seymour Papert publish their book Perceptrons, describing some of the limitations of perceptrons and neural networks. The interpretation that the book shows neural networks to be fundamentally limited is seen as a hindrance to research into neural networks.[18][19] |

| 1970 | | Automatic Differentiation (Backpropagation) | Seppo Linnainmaa publishes the general method for automatic differentiation (AD) of discrete connected networks of nested differentiable functions.[20][21] This corresponds to the modern version of backpropagation, but is not yet named as such.[22][23][24][25] |

| 1979 | | Stanford Cart | Students at Stanford University develop a cart that can navigate and avoid obstacles in a room.[2] |

| 1979 | Discovery | Neocognitron | Kunihiko Fukushima first publishes his work on the neocognitron, a type of artificial neural network (ANN).[26][27] The neocognitron later inspires convolutional neural networks (CNNs).[28] |

| 1981 | | Explanation Based Learning | Gerald DeJong introduces Explanation Based Learning, in which a computer algorithm analyses data, creates a general rule it can follow, and discards unimportant data.[2] |

| 1982 | Discovery | Recurrent Neural Network | John Hopfield popularizes Hopfield networks, a type of recurrent neural network that can serve as content-addressable memory systems.[29] |

| 1985 | | NetTalk | Terry Sejnowski develops a program that learns to pronounce words the same way a baby does.[2] |

| 1986 | Application | Backpropagation | Seppo Linnainmaa's reverse mode of automatic differentiation (first applied to neural networks by Paul Werbos) is used in experiments by David Rumelhart, Geoff Hinton and Ronald J. Williams to learn internal representations.[30] |

| 1989 | Discovery | Reinforcement Learning | Christopher Watkins develops Q-learning, which greatly improves the practicality and feasibility of reinforcement learning.[31] |

| 1989 | Commercialization | Commercialization of Machine Learning on Personal Computers | Axcelis, Inc. releases Evolver, the first software package to commercialize the use of genetic algorithms on personal computers.[32] |

| 1992 | Achievement | Machines Playing Backgammon | Gerald Tesauro develops TD-Gammon, a computer backgammon program that uses an artificial neural network trained using temporal-difference learning (hence the 'TD' in the name). TD-Gammon is able to rival, but not consistently surpass, the abilities of top human backgammon players.[33] |

| 1995 | Discovery | Random Forest Algorithm | Tin Kam Ho publishes a paper describing random decision forests.[34] |

| 1995 | Discovery | Support Vector Machines | Corinna Cortes and Vladimir Vapnik publish their work on support vector machines.[35][36] |

| 1997 | Achievement | IBM Deep Blue Beats Kasparov | IBM's Deep Blue beats the world chess champion, Garry Kasparov.[2] |

| 1997 | Discovery | LSTM | Sepp Hochreiter and Jürgen Schmidhuber invent long short-term memory (LSTM) recurrent neural networks,[37] greatly improving the efficiency and practicality of recurrent neural networks. |

| 1998 | | MNIST database | A team led by Yann LeCun releases the MNIST database, a dataset comprising a mix of handwritten digits from American Census Bureau employees and American high school students.[38] The MNIST database has since become a benchmark for evaluating handwriting recognition. |

| 2002 | | Torch Machine Learning Library | Torch, a software library for machine learning, is first released.[39] |

| 2006 | | The Netflix Prize | The Netflix Prize competition is launched by Netflix. The aim of the competition is to use machine learning to improve, by at least 10%, on the accuracy of Netflix's own recommendation software in predicting a user's rating for a film given their ratings for previous films.[40] The prize is won in 2009. |

| 2009 | Achievement | ImageNet | ImageNet is created. ImageNet is a large visual database envisioned by Fei-Fei Li from Stanford University, who realized that the best machine learning algorithms wouldn't work well if the data didn't reflect the real world.[41] For many, ImageNet was the catalyst for the AI boom[42] of the 21st century. |

| 2010 | | Kaggle Competition | Kaggle, a website that serves as a platform for machine learning competitions, is launched.[43] |

| 2010 | | Wall Street Journal Profiles Machine Learning Investing | The Wall Street Journal profiles a new wave of investing and focuses on RebellionResearch.com, which would later be the subject of author Scott Patterson's book Dark Pools.[44] |

| 2011 | Achievement | Beating Humans in Jeopardy | Using a combination of machine learning, natural language processing and information retrieval techniques, IBM's Watson beats two human champions in a Jeopardy! competition.[45] |

| 2012 | Achievement | Recognizing Cats on YouTube | The Google Brain team, led by Andrew Ng and Jeff Dean, creates a neural network that learns to recognize cats by watching unlabeled images taken from frames of YouTube videos.[46][47] |

| 2014 | | Leap in Face Recognition | Facebook researchers publish their work on DeepFace, a system that uses neural networks to identify faces with 97.35% accuracy. The results are an improvement of more than 27% over previous systems and rival human performance.[48] |

| 2014 | | Sibyl | Researchers from Google detail their work on Sibyl,[49] a proprietary platform for massively parallel machine learning used internally by Google to make predictions about user behavior and provide recommendations.[50] |

| 2016 | Achievement | Beating Humans in Go | Google's AlphaGo program becomes the first Computer Go program to beat an unhandicapped professional human player[51] using a combination of machine learning and tree search techniques.[52] It is later improved as AlphaGo Zero and then, in 2017, generalized to chess and other two-player games as AlphaZero. |

References

- Solomonoff, Ray J. "A formal theory of inductive inference. Part II." Information and control 7.2 (1964): 224–254.

- Marr, Bernard. "A Short History of Machine Learning – Every Manager Should Read". Forbes. Retrieved 28 Sep 2016.

- Siegelmann, Hava; Sontag, Eduardo (1995). "Computational Power of Neural Networks". Journal of Computer and System Sciences. 50 (1): 132–150. doi:10.1006/jcss.1995.1013.

- Siegelmann, Hava (1995). "Computation Beyond the Turing Limit". Science. 268 (5210): 545–548. Bibcode:1995Sci...268..545S. doi:10.1126/science.268.5210.545. PMID 17756722.

- Ben-Hur, Asa; Horn, David; Siegelmann, Hava; Vapnik, Vladimir (2001). "Support vector clustering". Journal of Machine Learning Research. 2: 51–86.

- Hofmann, Thomas; Schölkopf, Bernhard; Smola, Alexander J. (2008). "Kernel methods in machine learning". The Annals of Statistics. 36 (3): 1171–1220. doi:10.1214/009053607000000677. JSTOR 25464664.

- Bennett, James; Lanning, Stan (2007). "The netflix prize" (PDF). Proceedings of KDD Cup and Workshop 2007.

- Bayes, Thomas (1 January 1763). "An Essay towards solving a Problem in the Doctrine of Chances". Philosophical Transactions. 53: 370–418. doi:10.1098/rstl.1763.0053. JSTOR 105741.

- Legendre, Adrien-Marie (1805). Nouvelles méthodes pour la détermination des orbites des comètes (in French). Paris: Firmin Didot. p. viii. Retrieved 13 June 2016.

- O'Connor, J J; Robertson, E F. "Pierre-Simon Laplace". School of Mathematics and Statistics, University of St Andrews, Scotland. Retrieved 15 June 2016.

- Hayes, Brian (2013). "First Links in the Markov Chain". American Scientist. Sigma Xi, The Scientific Research Society. 101 (March–April 2013): 92. doi:10.1511/2013.101.1. Retrieved 15 June 2016.

Delving into the text of Alexander Pushkin's novel in verse Eugene Onegin, Markov spent hours sifting through patterns of vowels and consonants. On January 23, 1913, he summarized his findings in an address to the Imperial Academy of Sciences in St. Petersburg. His analysis did not alter the understanding or appreciation of Pushkin's poem, but the technique he developed—now known as a Markov chain—extended the theory of probability in a new direction.

- Turing, Alan (October 1950). "Computing Machinery and Intelligence". Mind. 59 (236): 433–460. doi:10.1093/mind/LIX.236.433. Retrieved 8 June 2016.

- Crevier 1993, pp. 34–35 and Russell & Norvig 2003, p. 17

- McCarthy, John; Feigenbaum, Ed. "Arthur Samuel: Pioneer in Machine Learning". AI Magazine (3). Association for the Advancement of Artificial Intelligence. p. 10. Retrieved 5 June 2016.

- Rosenblatt, Frank (1958). "The perceptron: A probabilistic model for information storage and organization in the brain" (PDF). Psychological Review. 65 (6): 386–408. doi:10.1037/h0042519. PMID 13602029.

- Mason, Harding; Stewart, D; Gill, Brendan (6 December 1958). "Rival". The New Yorker. Retrieved 5 June 2016.

- Child, Oliver (13 March 2016). "Menace: the Machine Educable Noughts And Crosses Engine Read". Chalkdust Magazine. Retrieved 16 Jan 2018.

- Cohen, Harvey. "The Perceptron". Retrieved 5 June 2016.

- Colner, Robert (4 March 2016). "A brief history of machine learning". SlideShare. Retrieved 5 June 2016.

- Seppo Linnainmaa (1970). "The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors." Master's Thesis (in Finnish), Univ. Helsinki, 6–7.

- Linnainmaa, Seppo (1976). "Taylor expansion of the accumulated rounding error". BIT Numerical Mathematics. 16 (2): 146–160. doi:10.1007/BF01931367.

- Griewank, Andreas (2012). "Who Invented the Reverse Mode of Differentiation?". Documenta Mathematica, Extra Volume ISMP: 389–400.

- Griewank, Andreas and Walther, A. Principles and Techniques of Algorithmic Differentiation, Second Edition. SIAM, 2008.

- Schmidhuber, Jürgen (2015). "Deep learning in neural networks: An overview". Neural Networks. 61: 85–117. arXiv:1404.7828. Bibcode:2014arXiv1404.7828S. doi:10.1016/j.neunet.2014.09.003. PMID 25462637.

- Schmidhuber, Jürgen (2015). "Deep Learning (Section on Backpropagation)". Scholarpedia. 10 (11): 32832. Bibcode:2015SchpJ..1032832S. doi:10.4249/scholarpedia.32832.

- Fukushima, Kunihiko (October 1979). "位置ずれに影響されないパターン認識機構の神経回路のモデル --- ネオコグニトロン ---" [Neural network model for a mechanism of pattern recognition unaffected by shift in position — Neocognitron —]. Trans. IECE (in Japanese). J62-A (10): 658–665.

- Fukushima, Kunihiko (April 1980). "Neocognitron: A Self-organizing Neural Network Model for a Mechanism of Pattern Recognition Unaffected by Shift in Position" (PDF). Biological Cybernetics. 36 (4): 193–202. doi:10.1007/bf00344251. PMID 7370364. Retrieved 5 June 2016.

- Le Cun, Yann. "Deep Learning". CiteSeerX 10.1.1.297.6176.

- Hopfield, John (April 1982). "Neural networks and physical systems with emergent collective computational abilities" (PDF). Proceedings of the National Academy of Sciences of the United States of America. 79 (8): 2554–2558. Bibcode:1982PNAS...79.2554H. doi:10.1073/pnas.79.8.2554. PMC 346238. PMID 6953413. Retrieved 8 June 2016.

- Rumelhart, David; Hinton, Geoffrey; Williams, Ronald (9 October 1986). "Learning representations by back-propagating errors" (PDF). Nature. 323 (6088): 533–536. Bibcode:1986Natur.323..533R. doi:10.1038/323533a0. Retrieved 5 June 2016.

- Watkins, Christopher (1 May 1989). "Learning from Delayed Rewards" (PDF).

- Markoff, John (29 August 1990). "BUSINESS TECHNOLOGY; What's the Best Answer? It's Survival of the Fittest". New York Times. Retrieved 8 June 2016.

- Tesauro, Gerald (March 1995). "Temporal Difference Learning and TD-Gammon". Communications of the ACM. 38 (3): 58–68. doi:10.1145/203330.203343.

- Ho, Tin Kam (August 1995). "Random Decision Forests" (PDF). Proceedings of the Third International Conference on Document Analysis and Recognition. Montreal, Quebec: IEEE. 1: 278–282. doi:10.1109/ICDAR.1995.598994. ISBN 0-8186-7128-9. Retrieved 5 June 2016.

- Golge, Eren. "BRIEF HISTORY OF MACHINE LEARNING". A Blog From a Human-engineer-being. Retrieved 5 June 2016.

- Cortes, Corinna; Vapnik, Vladimir (September 1995). "Support-vector networks". Machine Learning. Kluwer Academic Publishers. 20 (3): 273–297. doi:10.1007/BF00994018. ISSN 0885-6125.

- Hochreiter, Sepp; Schmidhuber, Jürgen (1997). "Long Short-Term Memory" (PDF). Neural Computation. 9 (8): 1735–1780. doi:10.1162/neco.1997.9.8.1735. PMID 9377276. Archived from the original (PDF) on 2015-05-26.

- LeCun, Yann; Cortes, Corinna; Burges, Christopher. "THE MNIST DATABASE of handwritten digits". Retrieved 16 June 2016.

- Collobert, Ronan; Bengio, Samy; Mariethoz, Johnny (30 October 2002). "Torch: a modular machine learning software library" (PDF). Retrieved 5 June 2016.

- "The Netflix Prize Rules". Netflix Prize. Netflix. Archived from the original on 3 March 2012. Retrieved 16 June 2016.

- Gershgorn, Dave. "ImageNet: the data that spawned the current AI boom — Quartz". qz.com. Retrieved 2018-03-30.

- Hardy, Quentin (2016-07-18). "Reasons to Believe the A.I. Boom Is Real". The New York Times. ISSN 0362-4331. Retrieved 2018-03-30.

- "About". Kaggle. Kaggle Inc. Retrieved 16 June 2016.

- "About".

- Markoff, John (17 February 2011). "Computer Wins on 'Jeopardy!': Trivial, It's Not". New York Times. p. A1. Retrieved 5 June 2016.

- Le, Quoc V.; Ranzato, Marc'Aurelio; Monga, Rajat; Devin, Matthieu; Corrado, Greg; Chen, Kai; Dean, Jeffrey; Ng, Andrew Y. (2012). "Building high-level features using large scale unsupervised learning" (PDF). Proceedings of the 29th International Conference on Machine Learning, ICML 2012, Edinburgh, Scotland, UK, June 26 - July 1, 2012. icml.cc / Omnipress. arXiv:1112.6209. Bibcode:2011arXiv1112.6209L.

- Markoff, John (26 June 2012). "How Many Computers to Identify a Cat? 16,000". New York Times. p. B1. Retrieved 5 June 2016.

- Taigman, Yaniv; Yang, Ming; Ranzato, Marc'Aurelio; Wolf, Lior (24 June 2014). "DeepFace: Closing the Gap to Human-Level Performance in Face Verification". Conference on Computer Vision and Pattern Recognition. Retrieved 8 June 2016.

- Canini, Kevin; Chandra, Tushar; Ie, Eugene; McFadden, Jim; Goldman, Ken; Gunter, Mike; Harmsen, Jeremiah; LeFevre, Kristen; Lepikhin, Dmitry; Llinares, Tomas Lloret; Mukherjee, Indraneel; Pereira, Fernando; Redstone, Josh; Shaked, Tal; Singer, Yoram. "Sibyl: A system for large scale supervised machine learning" (PDF). Jack Baskin School of Engineering. UC Santa Cruz. Retrieved 8 June 2016.

- Woodie, Alex (17 July 2014). "Inside Sibyl, Google's Massively Parallel Machine Learning Platform". Datanami. Tabor Communications. Retrieved 8 June 2016.

- "Google achieves AI 'breakthrough' by beating Go champion". BBC News. BBC. 27 January 2016. Retrieved 5 June 2016.

- "AlphaGo". Google DeepMind. Google Inc. Retrieved 5 June 2016.

This article is issued from Wikipedia. The text is licensed under Creative Commons - Attribution - Sharealike. Additional terms may apply for the media files.