Spiking neural network

Spiking neural networks (SNNs) are artificial neural networks that more closely mimic natural neural networks.[1] In addition to neuronal and synaptic state, SNNs incorporate the concept of time into their operating model. The idea is that neurons in the SNN do not fire at each propagation cycle (as it happens with typical multi-layer perceptron networks), but rather fire only when a membrane potential – an intrinsic quality of the neuron related to its membrane electrical charge – reaches a specific value. When a neuron fires, it generates a signal that travels to other neurons which, in turn, increase or decrease their potentials in accordance with this signal.

In the context of spiking neural networks, the current activation level (modeled as a differential equation) is normally considered to be the neuron's state. Incoming spikes push this value higher until the neuron either fires or the value decays. Various coding methods exist for interpreting the outgoing spike train as a real-valued number, relying on either the frequency of spikes or the interval between spikes to encode information.
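The behaviour described above can be illustrated with a minimal leaky integrate-and-fire sketch. This is only one simple neuron model among many; the function name `simulate_lif` and the values chosen for `weight`, `tau` and `threshold` are illustrative assumptions, not parameters taken from any cited source.

```python
def simulate_lif(input_spikes, weight=0.6, tau=10.0, threshold=1.0, dt=1.0):
    """Simulate one leaky integrate-and-fire neuron (illustrative sketch).

    input_spikes: sequence of 0/1 values, one per time step.
    Returns (potentials, output_spikes), both lists of the same length.
    """
    v = 0.0  # membrane potential, the neuron's state
    potentials, output_spikes = [], []
    for s in input_spikes:
        # Leak: one Euler step of dv/dt = -v / tau, so the state decays.
        v += dt * (-v / tau)
        # Each incoming spike pushes the potential higher.
        v += weight * s
        if v >= threshold:
            # Threshold reached: emit a spike and reset the potential.
            output_spikes.append(1)
            v = 0.0
        else:
            output_spikes.append(0)
        potentials.append(v)
    return potentials, output_spikes


# Two input spikes in quick succession drive the neuron over threshold.
_, spikes = simulate_lif([1, 1, 0, 0])
print(spikes)  # [0, 1, 0, 0]

# A single isolated input spike only raises the potential, which then decays.
_, spikes = simulate_lif([1, 0, 0, 0, 0])
print(spikes)  # [0, 0, 0, 0, 0]
```

The neuron here stays silent on most time steps and fires only when accumulated input crosses the threshold, in contrast to a perceptron unit that produces an output on every propagation cycle.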

History

Artificial neural networks are usually fully connected, receiving input from every neuron in the previous layer and signalling every neuron in the subsequent layer. Although these networks have achieved breakthroughs in many fields, they are biologically inaccurate and do not mimic the operation mechanism of neurons in the brain of a living thing.[2]

The biologically-inspired Hodgkin–Huxley model of a spiking neuron was proposed in 1952. This model describes how action potentials are initiated and propagated. Communication between neurons, which requires the exchange of chemical neurotransmitters in the synaptic gap, is described in various models, such as the integrate-and-fire model, FitzHugh–Nagumo model (1961–1962), and Hindmarsh–Rose model (1984).

In July 2019, at the DARPA Electronics Resurgence Initiative summit, Intel unveiled an 8-million-neuron neuromorphic system comprising 64 Loihi research chips.[3]

Underpinnings

From the information theory perspective, the problem is to explain how information is encoded and decoded by a series of trains of pulses, i.e. action potentials. Thus, a fundamental question of neuroscience is to determine whether neurons communicate by a rate or temporal code.[4] Temporal coding suggests that a single spiking neuron can replace hundreds of hidden units on a sigmoidal neural net.[1]

A spiking neural network takes temporal information into account. Not every neuron is activated in every iteration of propagation (as is the case in a typical multilayer perceptron network); a neuron is activated only when its membrane potential reaches a certain value. When a neuron is activated, it produces a signal that is passed to connected neurons, raising or lowering their membrane potential.

In a spiking neural network, the neuron's current state is defined as its level of activation (modeled as a differential equation). An input pulse causes the state value to rise for a period of time and then gradually decline. Encoding schemes have been constructed to interpret the resulting output pulse sequences as a number, taking into account both pulse frequency and pulse interval. Network models based on the precise timing of pulses can also be constructed; such networks use spike coding. By using the exact time at which each pulse occurs, a neural network can exploit more information and offer stronger computing power.
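As a rough illustration of the two readouts mentioned above, the sketch below decodes a spike train once by pulse frequency and once by pulse interval. The helper names `firing_rate` and `mean_interspike_interval` and the example spike times are hypothetical, chosen only to show that timing can distinguish trains that a pure rate readout cannot.

```python
def firing_rate(spike_times, window):
    """Rate readout: number of spikes per unit time in the observation window."""
    return len(spike_times) / window


def mean_interspike_interval(spike_times):
    """Interval readout: mean gap between consecutive spikes.

    Returns None when fewer than two spikes are available.
    """
    if len(spike_times) < 2:
        return None
    gaps = [later - earlier for earlier, later in zip(spike_times, spike_times[1:])]
    return sum(gaps) / len(gaps)


# Two hypothetical spike trains observed over the same 100 ms window.
regular = [10, 30, 50, 70, 90]   # evenly spread spikes
burst = [10, 12, 14, 16, 18]     # same number of spikes, packed together

# The rate readout cannot tell the two trains apart...
print(firing_rate(regular, 100.0), firing_rate(burst, 100.0))  # 0.05 0.05

# ...but the interval readout can.
print(mean_interspike_interval(regular))  # 20.0
print(mean_interspike_interval(burst))    # 2.0
```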

Pulse-coupled neural networks (PCNN) are often confused with SNNs. A PCNN can be seen as a kind of SNN.

The SNN approach uses a binary output (signal/no signal) instead of the continuous output of traditional ANNs. Spike trains are not easily interpretable, but they increase the ability to process spatiotemporal data (that is, real-world sensory data). "Space" refers to the fact that neurons connect only to nearby neurons, so that they can process blocks of the input separately (similar to the filters of a CNN). "Time" refers to the fact that spike trains unfold over time, so that the information lost in binary coding can be recovered from spike timing. This avoids the additional complexity of a recurrent neural network (RNN). Spiking neurons turn out to be more powerful computational units than traditional artificial neurons.[2]

SNNs are theoretically more powerful than second-generation networks; however, training issues and hardware requirements limit their use. Although unsupervised, biologically inspired learning methods such as Hebbian learning and STDP are available, no effective supervised training method for SNNs provides better performance than second-generation networks. Furthermore, spike trains are not differentiable, which rules out backpropagation-based training methods such as gradient descent. Therefore, in order to use SNNs correctly to solve real-world tasks, an efficient supervised learning method is required, probably one that mirrors learning in brains.[2]
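To make the STDP rule mentioned above concrete, here is a minimal sketch of a pair-based update with exponential timing windows, a common textbook form. The function name `stdp_weight_change` and the values of `a_plus`, `a_minus` and `tau` are assumptions for illustration only; the text above does not specify a particular variant.

```python
import math


def stdp_weight_change(t_pre, t_post, a_plus=0.01, a_minus=0.012, tau=20.0):
    """Pair-based STDP update for one pre/post spike pair (illustrative sketch).

    t_pre, t_post: spike times of the presynaptic and postsynaptic neuron.
    Returns the change to apply to the synaptic weight.
    """
    delta_t = t_post - t_pre
    if delta_t > 0:
        # Pre fires before post: strengthen the synapse (potentiation).
        return a_plus * math.exp(-delta_t / tau)
    if delta_t < 0:
        # Post fires before pre: weaken the synapse (depression).
        return -a_minus * math.exp(delta_t / tau)
    return 0.0


# Presynaptic spike 5 ms before the postsynaptic spike: positive change.
print(stdp_weight_change(t_pre=10.0, t_post=15.0) > 0)   # True

# Reversed order: negative change.
print(stdp_weight_change(t_pre=15.0, t_post=10.0) < 0)   # True
```

The update depends only on local spike times and needs no error signal, which is why rules of this kind count as unsupervised.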

SNNs have much larger computational costs for simulating realistic neural models than traditional ANNs.

Applications

SNNs can in principle be applied to the same tasks as traditional ANNs.[5] In addition, SNNs can model the central nervous system of biological organisms, such as an insect seeking food without prior knowledge of the environment.[6] Due to their relative realism, they can be used to study the operation of biological neural circuits. Starting with a hypothesis about the topology of a biological neuronal circuit and its function, recordings of this circuit can be compared to the output of the corresponding SNN to evaluate the plausibility of the hypothesis. However, the lack of effective training mechanisms for SNNs can be a limiting factor for some applications, including computer vision tasks.

As of 2019, SNNs lag ANNs in terms of accuracy, but the gap is decreasing and has vanished on some tasks.[7]

Software

A diverse range of application software can simulate SNNs. This software can be classified according to its uses:

SNN simulation

These simulate complex neural models with a high level of detail and accuracy. Large networks usually require lengthy processing. Candidates include:[8]

- GENESIS (the GEneral NEural SImulation System[9]) – developed in James Bower's laboratory at Caltech;

- NEURON – mainly developed by Michael Hines, John W. Moore and Ted Carnevale at Yale University and Duke University;

- Brian – developed by Romain Brette and Dan Goodman at the École Normale Supérieure;

- NEST – developed by the NEST Initiative;

- BindsNET – developed by the Biologically Inspired Neural and Dynamical Systems (BINDS) lab at the University of Massachusetts Amherst.[10]

Hardware

Future neuromorphic architectures[11] will comprise billions of nanoscale synapses (nanosynapses), which requires a clear understanding of the physical mechanisms responsible for plasticity. Experimental systems based on ferroelectric tunnel junctions have been used to show that STDP can be harnessed from heterogeneous polarization switching. Through combined scanning probe imaging, electrical transport and atomic-scale molecular dynamics, conductance variations can be modelled by nucleation-dominated reversal of domains. Simulations show that arrays of ferroelectric nanosynapses can autonomously learn to recognize patterns in a predictable way, opening the path towards unsupervised learning.[12]

- Neurogrid is a board that can simulate spiking neural networks directly in hardware. (Stanford University)

- SpiNNaker (Spiking Neural Network Architecture) uses ARM processors as the building blocks of a massively parallel computing platform based on a six-layer thalamocortical model. (University of Manchester)[13]

- TrueNorth is a processor that contains 5.4 billion transistors and consumes only 70 milliwatts; most processors in personal computers contain about 1.4 billion transistors and require 35 watts or more. IBM refers to the design principle behind TrueNorth as neuromorphic computing. Its primary purpose is pattern recognition. While critics say the chip is not powerful enough, its supporters point out that this is only the first generation and that the capabilities of improved iterations will become clear. (IBM)[14]

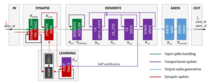

- Dynamic Neuromorphic Asynchronous Processor (DYNAP)[15] combines slow, low-power, inhomogeneous sub-threshold analog circuits, and fast programmable digital circuits. It supports reconfigurable, general-purpose, real-time neural networks of spiking neurons. This allows the implementation of real-time spike-based neural processing architectures[16] in which memory and computation are co-localized. It solves the von Neumann bottleneck problem and enables real-time multiplexed communication of spiking events for realising massive networks. Recurrent networks, feed-forward networks, convolutional networks, attractor networks, echo-state networks, deep networks, and sensor fusion networks are a few of the possibilities.[17]

- Loihi is a 14-nm Intel chip with a 60 mm² die that offers 128 cores and 130,000 neurons.[18] It integrates a wide range of features, such as hierarchical connectivity, dendritic compartments, synaptic delays and programmable synaptic learning rules.[19] Running a spiking convolutional form of the Locally Competitive Algorithm, Loihi can solve LASSO optimization problems with an energy-delay product over three orders of magnitude better than conventional solvers running on a CPU at iso-process/voltage/area.[20] A research system of 64 Loihi chips provides an 8-million-neuron neuromorphic system. Loihi is reported to be about 1,000 times as fast as a CPU and 10,000 times as energy efficient.[3]

- BrainScaleS is based on physical emulations of neuron, synapse and plasticity models with digital connectivity, running up to ten thousand times faster than real time. It was developed by the European Human Brain Project. By contrast, the SpiNNaker system is based on numerical models running in real time on custom digital multicore chips using the ARM architecture. It provides custom digital chips, each with eighteen cores and 128 Mbyte of shared local RAM, with a total of over 1,000,000 cores.[21]
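Some of the figures quoted in the list above can be cross-checked with simple arithmetic. The sketch below uses only numbers stated in the list; the derived values (for example, the implied number of SpiNNaker chips) are back-of-the-envelope estimates, not figures reported by the cited sources.

```python
import math

# Loihi: 64 chips with 130,000 neurons each.
loihi_neurons_per_chip = 130_000
loihi_chips = 64
loihi_total_neurons = loihi_neurons_per_chip * loihi_chips
print(loihi_total_neurons)  # 8320000, consistent with "8-million-neuron"

# TrueNorth: 70 milliwatts versus 35 watts for a typical PC processor.
truenorth_power_mw = 70
pc_power_mw = 35_000
power_ratio = pc_power_mw / truenorth_power_mw
print(power_ratio)  # 500.0, i.e. about 500 times less power

# SpiNNaker: over 1,000,000 cores at eighteen cores per chip.
spinnaker_cores = 1_000_000
cores_per_chip = 18
min_spinnaker_chips = math.ceil(spinnaker_cores / cores_per_chip)
print(min_spinnaker_chips)  # 55556 chips at minimum (derived estimate)
```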

Benchmarks

The classification capabilities of spiking networks trained with unsupervised learning methods[22] have been tested on common benchmark datasets such as Iris, Wisconsin Breast Cancer and Statlog Landsat.[23][24][25] Various approaches to information encoding and network design have been used, for example a 2-layer feedforward network for data clustering and classification. Based on the idea proposed in Hopfield (1995), the authors implemented models of local receptive fields that combine the properties of radial basis functions (RBF) and spiking neurons to convert input signals (the data to be classified) from a floating-point representation into a spiking representation.[26][27]
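The conversion from a floating-point value to a spiking representation can be sketched with a small population of Gaussian receptive fields, where a strongly responding neuron fires early and a weakly responding one fires late. This is a simplified illustration and not the cited authors' exact scheme: the function name `encode_value`, the evenly spaced centers, the choice of width and the linear mapping from response to spike time are all assumptions.

```python
import math


def encode_value(x, x_min=0.0, x_max=1.0, n_neurons=5, t_max=10.0):
    """Encode a float as one spike time per neuron (illustrative sketch).

    Each neuron has a Gaussian (RBF-like) receptive field centered on a
    different point of [x_min, x_max]. A response near 1 maps to a spike
    time near 0; a response near 0 maps to a spike time near t_max.
    """
    step = (x_max - x_min) / (n_neurons - 1)
    centers = [x_min + i * step for i in range(n_neurons)]
    sigma = step  # assumed width: one center spacing
    spike_times = []
    for center in centers:
        response = math.exp(-((x - center) ** 2) / (2 * sigma ** 2))
        spike_times.append(t_max * (1.0 - response))
    return spike_times


# The neuron whose center matches the input fires first (at time 0.0);
# neurons with more distant centers fire progressively later.
times = encode_value(0.5)
print([round(t, 2) for t in times])
print(times.index(min(times)))  # 2, the middle neuron
```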

References

- Maass, Wolfgang (1997). "Networks of spiking neurons: The third generation of neural network models". Neural Networks. 10 (9): 1659–1671. doi:10.1016/S0893-6080(97)00011-7. ISSN 0893-6080.

- "Spiking Neural Networks, the Next Generation of Machine Learning". 16 July 2019.

- "Intel’s Neuromorphic System Hits 8 Million Neurons, 100 Million Coming by 2020". IEEE Spectrum. https://spectrum.ieee.org/tech-talk/robotics/artificial-intelligence/intels-neuromorphic-system-hits-8-million-neurons-100-million-coming-by-2020.amp.html

- Wulfram Gerstner (2001). "Spiking Neurons". In Wolfgang Maass; Christopher M. Bishop (eds.). Pulsed Neural Networks. MIT Press. ISBN 978-0-262-63221-8.

- Alnajjar, F.; Murase, K. (2008). "A simple Aplysia-like spiking neural network to generate adaptive behavior in autonomous robots". Adaptive Behavior. 14 (5): 306–324. doi:10.1177/1059712308093869.

- X Zhang; Z Xu; C Henriquez; S Ferrari (Dec 2013). Spike-based indirect training of a spiking neural network-controlled virtual insect. IEEE Decision and Control. pp. 6798–6805. CiteSeerX 10.1.1.671.6351. doi:10.1109/CDC.2013.6760966. ISBN 978-1-4673-5717-3.

- Tavanaei, Amirhossein; Ghodrati, Masoud; Kheradpisheh, Saeed Reza; Masquelier, Timothée; Maida, Anthony (March 2019). "Deep learning in spiking neural networks". Neural Networks. 111: 47–63. arXiv:1804.08150. doi:10.1016/j.neunet.2018.12.002. PMID 30682710.

- Abbott, L. F.; Nelson, Sacha B. (November 2000). "Synaptic plasticity: taming the beast". Nature Neuroscience. 3 (S11): 1178–1183. doi:10.1038/81453. PMID 11127835.

- Atiya, A.F.; Parlos, A.G. (May 2000). "New results on recurrent network training: unifying the algorithms and accelerating convergence". IEEE Transactions on Neural Networks. 11 (3): 697–709. doi:10.1109/72.846741. PMID 18249797.

- "Hananel-Hazan/bindsnet: Simulation of spiking neural networks (SNNs) using PyTorch". 31 March 2020.

- Sutton RS, Barto AG (2002) Reinforcement Learning: An Introduction. Bradford Books, MIT Press, Cambridge, MA.

- Boyn, S.; Grollier, J.; Lecerf, G. (2017-04-03). "Learning through ferroelectric domain dynamics in solid-state synapses". Nature Communications. 8: 14736. Bibcode:2017NatCo...814736B. doi:10.1038/ncomms14736. PMC 5382254. PMID 28368007.

- Xin Jin; Furber, Steve B.; Woods, John V. (2008). "Efficient modelling of spiking neural networks on a scalable chip multiprocessor". 2008 IEEE International Joint Conference on Neural Networks (IEEE World Congress on Computational Intelligence). pp. 2812–2819. doi:10.1109/IJCNN.2008.4634194. ISBN 978-1-4244-1820-6.

- Markoff, John (August 8, 2014). "A new chip functions like a brain, IBM says". The New York Times. p. B1.

- Sayenko, Dimitry G.; Vette, Albert H.; Kamibayashi, Kiyotaka; Nakajima, Tsuyoshi; Akai, Masami; Nakazawa, Kimitaka (March 2007). "Facilitation of the soleus stretch reflex induced by electrical excitation of plantar cutaneous afferents located around the heel". Neuroscience Letters. 415 (3): 294–298. doi:10.1016/j.neulet.2007.01.037. PMID 17276004.

- Schrauwen B, Campenhout JV (2004) Improving spikeprop: enhancements to an error-backpropagation rule for spiking neural networks. In: Proceedings of 15th ProRISC Workshop, Veldhoven, the Netherlands

- Indiveri, Giacomo; Corradi, Federico; Qiao, Ning (2015). "Neuromorphic architectures for spiking deep neural networks". 2015 IEEE International Electron Devices Meeting (IEDM). pp. 4.2.1–4.2.4. doi:10.1109/IEDM.2015.7409623. ISBN 978-1-4673-9894-7.

- "Neuromorphic Computing - Next Generation of AI". Intel. Retrieved 2019-07-22.

- Yamazaki, Tadashi; Tanaka, Shigeru (17 October 2007). "A spiking network model for passage-of-time representation in the cerebellum". European Journal of Neuroscience. 26 (8): 2279–2292. doi:10.1111/j.1460-9568.2007.05837.x. PMC 2228369. PMID 17953620.

- Davies, Mike; Srinivasa, Narayan; Lin, Tsung-Han; Chinya, Gautham; Cao, Yongqiang; Choday, Sri Harsha; Dimou, Georgios; Joshi, Prasad; Imam, Nabil; Jain, Shweta; Liao, Yuyun; Lin, Chit-Kwan; Lines, Andrew; Liu, Ruokun; Mathaikutty, Deepak; McCoy, Steven; Paul, Arnab; Tse, Jonathan; Venkataramanan, Guruguhanathan; Weng, Yi-Hsin; Wild, Andreas; Yang, Yoonseok; Wang, Hong (January 2018). "Loihi: A Neuromorphic Manycore Processor with On-Chip Learning". IEEE Micro. 38 (1): 82–99. doi:10.1109/MM.2018.112130359.

- "Neuromorphic Computing". Human Brain Project. https://www.humanbrainproject.eu/en/silicon-brains/

- Ponulak, F.; Kasinski, A. (2010). "Supervised learning in spiking neural networks with ReSuMe: sequence learning, classification and spike-shifting". Neural Comput. 22 (2): 467–510. doi:10.1162/neco.2009.11-08-901. PMID 19842989.

- Newman et al. 1998

- Bohte et al. 2002a

- Belatreche et al. 2003

- Pfister, Jean-Pascal; Toyoizumi, Taro; Barber, David; Gerstner, Wulfram (June 2006). "Optimal Spike-Timing-Dependent Plasticity for Precise Action Potential Firing in Supervised Learning". Neural Computation. 18 (6): 1318–1348. arXiv:q-bio/0502037. Bibcode:2005q.bio.....2037P. doi:10.1162/neco.2006.18.6.1318. PMID 16764506.

- Bohte et al. 2002b

External links

- Full text of the book Spiking Neuron Models. Single Neurons, Populations, Plasticity by Wulfram Gerstner and Werner M. Kistler (ISBN 0-521-89079-9)