Hadamard product (matrices)

In mathematics, the Hadamard product (also known as the element-wise, entrywise[1]:ch. 5 or Schur[2] product) is a binary operation that takes two matrices of the same dimensions and produces another matrix of the same dimensions, in which each element (i, j) is the product of the elements (i, j) of the two operands. It should not be confused with the more common matrix product. It is attributed to, and named after, either French mathematician Jacques Hadamard or German mathematician Issai Schur.

The Hadamard product is associative and distributive. Unlike the matrix product, it is also commutative.

Definition

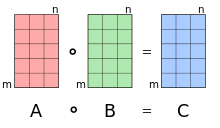

For two matrices A and B of the same dimension m × n, the Hadamard product $A \circ B$ (or $A \odot B$[3][4][5]) is a matrix of the same dimension as the operands, with elements given by

$$(A \circ B)_{ij} = (A \odot B)_{ij} = (A)_{ij}(B)_{ij}.$$

For matrices of different dimensions (m × n and p × q, where m ≠ p or n ≠ q) the Hadamard product is undefined.
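The definition translates almost directly into code. The following is a minimal sketch in plain Python, assuming matrices are stored as non-empty lists of rows; the function name `hadamard` is hypothetical and chosen only for illustration. It raises an error when the dimensions differ, mirroring the fact that the product is undefined in that case.

```python
def hadamard(a, b):
    """Return the element-wise (Hadamard) product of two equally sized matrices.

    Matrices are assumed to be non-empty lists of rows (an illustrative
    representation, not a standard one).
    """
    same_shape = len(a) == len(b) and all(
        len(row_a) == len(row_b) for row_a, row_b in zip(a, b)
    )
    if not same_shape:
        raise ValueError("Hadamard product is undefined for different dimensions")
    # Entry (i, j) of the result is a[i][j] * b[i][j].
    return [
        [x * y for x, y in zip(row_a, row_b)]
        for row_a, row_b in zip(a, b)
    ]


A = [[2, 3, 1], [0, 8, -2]]
B = [[3, 1, 4], [7, 9, 5]]
C = hadamard(A, B)  # [[6, 3, 4], [0, 72, -10]]
```

Because each output entry depends on only one entry from each operand, the operation costs one multiplication per entry, in contrast to the row-by-column sums of the matrix product.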

Example

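For example, the Hadamard product for a 3 × 3 matrix A with a 3 × 3 matrix B is

$$\begin{bmatrix} a_{11} & a_{12} & a_{13} \\ a_{21} & a_{22} & a_{23} \\ a_{31} & a_{32} & a_{33} \end{bmatrix} \circ \begin{bmatrix} b_{11} & b_{12} & b_{13} \\ b_{21} & b_{22} & b_{23} \\ b_{31} & b_{32} & b_{33} \end{bmatrix} = \begin{bmatrix} a_{11}b_{11} & a_{12}b_{12} & a_{13}b_{13} \\ a_{21}b_{21} & a_{22}b_{22} & a_{23}b_{23} \\ a_{31}b_{31} & a_{32}b_{32} & a_{33}b_{33} \end{bmatrix}.$$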
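As a concrete numeric instance, with entries chosen here purely for illustration, each entry of the result is the product of the two entries in the same position:

$$\begin{bmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \\ 7 & 8 & 9 \end{bmatrix} \circ \begin{bmatrix} 9 & 8 & 7 \\ 6 & 5 & 4 \\ 3 & 2 & 1 \end{bmatrix} = \begin{bmatrix} 9 & 16 & 21 \\ 24 & 25 & 24 \\ 21 & 16 & 9 \end{bmatrix}.$$

By contrast, the ordinary matrix product of the same two matrices has top-left entry 1·9 + 2·6 + 3·3 = 30.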

Properties

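- The Hadamard product is commutative (when working with a commutative ring), associative and distributive over addition. That is, if A, B, and C are matrices of the same size, and k is a scalar:

$$\begin{aligned} A \circ B &= B \circ A, \\ A \circ (B \circ C) &= (A \circ B) \circ C, \\ A \circ (B + C) &= A \circ B + A \circ C, \\ (kA) \circ B &= A \circ (kB) = k(A \circ B), \\ A \circ 0 &= 0 \circ A = 0. \end{aligned}$$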

- The identity matrix under Hadamard multiplication of two m × n matrices is an m × n matrix where all elements are equal to 1. This is different from the identity matrix under regular matrix multiplication, where only the elements of the main diagonal are equal to 1. Furthermore, a matrix has an inverse under Hadamard multiplication if and only if none of the elements are equal to zero.[6]

- For vectors x and y, and corresponding diagonal matrices $D_x$ and $D_y$ with these vectors as their main diagonals, the following identity holds:[1]:479

$$x^{*}(A \circ B)\,y = \operatorname{tr}\left(D_x^{*} A D_y B^{\mathsf T}\right),$$

where $x^{*}$ denotes the conjugate transpose of x. In particular, using vectors of ones, this shows that the sum of all elements in the Hadamard product is the trace of $AB^{\mathsf T}$. A related result for square A and B is that the row-sums of their Hadamard product are the diagonal elements of $AB^{\mathsf T}$:[7]

$$\sum_{j} (A \circ B)_{ij} = \left(AB^{\mathsf T}\right)_{ii}.$$

Similarly,

$$\left(y x^{*}\right) \circ A = D_y A D_x^{*}.$$

- The Hadamard product is a principal submatrix of the Kronecker product.

- The Hadamard product satisfies the rank inequality

$$\operatorname{rank}(A \circ B) \leq \operatorname{rank}(A)\,\operatorname{rank}(B).$$

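- If A and B are positive-definite n × n matrices, then the following inequality involving the Hadamard product is valid:[8]

$$\prod_{i=k}^{n} \lambda_i(A \circ B) \geq \prod_{i=k}^{n} \lambda_i(AB), \quad k = 1, \ldots, n,$$

where $\lambda_i(A)$ is the i-th largest eigenvalue of A.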

- If D and E are diagonal matrices, then[9]

$$D(A \circ B)E = (DAE) \circ B = (DA) \circ (BE) = (AE) \circ (DB) = A \circ (DBE).$$

- The Hadamard product of two vectors a and b is the same as matrix multiplication of one vector by the corresponding diagonal matrix of the other vector:

$$a \circ b = D_a b.$$
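The properties listed above can be spot-checked numerically. The following is a sketch using NumPy with real-valued random matrices (so the conjugate transpose reduces to the ordinary transpose); the variable names and the fixed seed are arbitrary choices, and a check of this kind illustrates the identities rather than proving them.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed, arbitrary choice
n = 3
A, B, C = (rng.standard_normal((n, n)) for _ in range(3))
x = rng.standard_normal(n)
y = rng.standard_normal(n)
k = 2.5
ones = np.ones((n, n))          # identity element for the Hadamard product
D, E = np.diag(x), np.diag(y)   # diagonal matrices built from x and y
# Row/column positions of the Kronecker product that hold the Hadamard product.
idx = [i * n + i for i in range(n)]

checks = {
    "commutative": np.allclose(A * B, B * A),
    "associative": np.allclose(A * (B * C), (A * B) * C),
    "distributive": np.allclose(A * (B + C), A * B + A * C),
    "scalar": np.allclose((k * A) * B, k * (A * B)),
    "identity": np.allclose(A * ones, A),
    # x^T (A o B) y = tr(D_x A D_y B^T) for real vectors
    "trace_identity": np.isclose(x @ (A * B) @ y, np.trace(D @ A @ E @ B.T)),
    # Row sums of A o B are the diagonal entries of A B^T
    "row_sums": np.allclose((A * B).sum(axis=1), np.diag(A @ B.T)),
    # A o B is a principal submatrix of the Kronecker product
    "kronecker": np.allclose(np.kron(A, B)[np.ix_(idx, idx)], A * B),
    # rank(A o B) <= rank(A) rank(B)
    "rank": np.linalg.matrix_rank(A * B)
    <= np.linalg.matrix_rank(A) * np.linalg.matrix_rank(B),
    # D (A o B) E = (D A E) o B for diagonal D and E
    "diagonal_scaling": np.allclose(D @ (A * B) @ E, (D @ A @ E) * B),
    # a o b = D_a b for vectors
    "vector_diag": np.allclose(x * y, D @ y),
}
```

Each entry of `checks` should evaluate to true up to floating-point tolerance; `np.allclose` is used instead of exact equality because the two sides are computed by different sequences of operations.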

Schur product theorem

The Hadamard product of two positive-semidefinite matrices is positive-semidefinite.[7] This is known as the Schur product theorem,[6] after German mathematician Issai Schur. For two positive-semidefinite matrices A and B, it is also known that the determinant of their Hadamard product is greater than or equal to the product of their respective determinants:[7]

$$\det(A \circ B) \geq \det(A)\det(B).$$
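Both statements can be illustrated numerically. The sketch below, which assumes NumPy, builds two positive-semidefinite matrices as Gram matrices of random factors (the names `X`, `Y`, `min_eig` and `det_gap` are illustrative) and then inspects the smallest eigenvalue of their Hadamard product and the gap in the determinant inequality.

```python
import numpy as np

rng = np.random.default_rng(1)  # fixed seed, arbitrary choice
X = rng.standard_normal((4, 4))
Y = rng.standard_normal((4, 4))
A = X @ X.T  # Gram matrices are positive-semidefinite
B = Y @ Y.T

H = A * B  # Hadamard product, again symmetric

# Schur product theorem: all eigenvalues of H should be non-negative.
min_eig = np.linalg.eigvalsh(H).min()

# Determinant inequality: det(A o B) - det(A) det(B) should be non-negative.
det_gap = np.linalg.det(H) - np.linalg.det(A) * np.linalg.det(B)
```

In floating-point arithmetic both quantities should be compared against a small negative tolerance rather than exactly zero.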

In programming languages

Hadamard multiplication is built into several programming languages under various names. In MATLAB, GNU Octave, GAUSS and HP Prime it is known as array multiplication, and in Julia as broadcast multiplication; all of these use the symbol .*.[10] In Fortran, R,[11] APL, J and Wolfram Language (Mathematica), it is the ordinary multiplication operator (written * in most of these languages and × in APL), whereas the matrix product is obtained with matmul, %*%, +.×, +/ .* and the . operator, respectively. In Python with the NumPy numerical library, multiplying array objects as a1*a2 produces the Hadamard product, while a1@a2, or m1*m2 for matrix objects, produces the matrix product. In the SymPy symbolic library, m1*m2 on matrix objects is likewise the matrix product, and the Hadamard product is available through the method m1.multiply_elementwise(m2). The Eigen C++ library provides a cwiseProduct member function for the Matrix class (a.cwiseProduct(b)), while the Armadillo library uses the operator % for compact expressions (a % b; a * b is a matrix product).
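As an illustration of the NumPy case, the following short sketch contrasts the two operators on the same pair of arrays; the variable names are arbitrary.

```python
import numpy as np

a = np.array([[1, 2], [3, 4]])
b = np.array([[5, 6], [7, 8]])

hadamard = a * b                   # element-wise: [[5, 12], [21, 32]]
also_hadamard = np.multiply(a, b)  # function form of the same operation
matrix_product = a @ b             # row-by-column: [[19, 22], [43, 50]]

# Caveat: NumPy broadcasts compatible shapes instead of raising an error,
# so `*` accepts operands that the mathematical definition would reject.
broadcast = a * np.array([10, 100])  # [[10, 200], [30, 400]]
```

Code that needs the strict mathematical definition may therefore want to compare `a.shape` and `b.shape` explicitly before multiplying.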

Applications

The Hadamard product appears in lossy compression algorithms such as JPEG. The decoding step involves an entry-for-entry product, in other words the Hadamard product.
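In JPEG decoding, this entry-for-entry product is the dequantization step, in which each quantized frequency coefficient of a block is multiplied by the matching entry of the quantization table. The sketch below assumes NumPy and uses made-up 4 × 4 values for brevity; real JPEG blocks and tables are 8 × 8, and neither array here is taken from an actual image or from the standard.

```python
import numpy as np

# Hypothetical quantized coefficients (illustrative values only).
quantized = np.array([
    [-26, -3, -6, 2],
    [0, -2, -4, 1],
    [-3, 1, 5, -1],
    [-3, 1, 2, -1],
])

# Hypothetical quantization table (illustrative values only).
quant_table = np.array([
    [16, 11, 10, 16],
    [12, 12, 14, 19],
    [14, 13, 16, 24],
    [14, 17, 22, 29],
])

# Dequantization: each coefficient is scaled by the table entry
# in the same position, i.e. a Hadamard product.
dequantized = quantized * quant_table
```

A matrix product would be wrong here, because every table entry is meant to scale exactly one coefficient.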

The Hadamard product is also used in the machine learning literature, for example to describe the architecture of recurrent neural networks such as GRUs or LSTMs.
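In these architectures, gate vectors with entries between 0 and 1 are multiplied entry by entry with state vectors to control how much of each component is kept. The following is a sketch of the commonly stated LSTM state update, assuming NumPy and assuming the gate activations have already been computed; the function name `lstm_state_update` and the sample values are hypothetical.

```python
import numpy as np


def lstm_state_update(c_prev, f, i, g, o):
    """One LSTM state update from already-computed gate activations.

    f, i, o are the forget, input and output gates; g is the candidate state.
    """
    c = f * c_prev + i * g  # c_t = f o c_{t-1} + i o g
    h = o * np.tanh(c)      # h_t = o o tanh(c_t)
    return c, h


c_prev = np.array([1.0, -2.0, 0.5])
f = np.array([1.0, 0.0, 0.5])  # keep, forget, half-keep
i = np.array([0.0, 1.0, 0.5])
g = np.array([0.3, 0.7, -1.0])
o = np.array([1.0, 1.0, 0.0])
c, h = lstm_state_update(c_prev, f, i, g, o)  # c is [1.0, 0.7, -0.25]
```

The first component is carried over unchanged, the second is replaced by the candidate value, and the third output is suppressed because its output gate is zero.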

Analogous operations

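Other Hadamard operations are also seen in the mathematical literature,[12] namely the Hadamard root and Hadamard power (which are in effect the same operation, since a root is a power with a fractional exponent), defined entrywise for a matrix A.

For $B = A^{\circ 2}$,

$$B_{ij} = A_{ij}^{2},$$

and for $B = A^{\circ \frac{1}{2}}$,

$$B_{ij} = A_{ij}^{\frac{1}{2}}.$$

The Hadamard inverse reads:[12]

$$B = A^{\circ -1}, \qquad B_{ij} = A_{ij}^{-1}.$$

A Hadamard division is defined as:[13][14]

$$C = A \oslash B, \qquad C_{ij} = \frac{A_{ij}}{B_{ij}}.$$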
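These entrywise operations correspond to the ordinary arithmetic operators on NumPy arrays. The sketch below assumes NumPy and uses small matrices with non-zero, non-negative entries so that the inverse, root and division are all defined; the variable names are illustrative.

```python
import numpy as np

A = np.array([[1.0, 4.0], [9.0, 16.0]])
B = np.array([[2.0, 2.0], [3.0, 4.0]])

power = A ** 2     # Hadamard power:    [[1, 16], [81, 256]]
root = A ** 0.5    # Hadamard root:     [[1, 2], [3, 4]]
inverse = 1.0 / A  # Hadamard inverse, defined because no entry is zero
quotient = A / B   # Hadamard division: [[0.5, 2], [3, 4]]

# For contrast, the matrix square uses row-by-column products instead.
matrix_square = A @ A  # [[37, 68], [153, 292]]
```

Multiplying `inverse` by `A` entry by entry gives the all-ones matrix, which is the identity for the Hadamard product.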

References

- Horn, Roger A.; Johnson, Charles R. (2012). Matrix analysis. Cambridge University Press.

- Davis, Chandler (1962). "The norm of the Schur product operation". Numerische Mathematik. 4 (1): 343–344. doi:10.1007/bf01386329.

- "Hadamard product - Machine Learning Glossary". machinelearning.wtf.

- "linear algebra - What does a dot in a circle mean?". Mathematics Stack Exchange.

- "Element-wise (or pointwise) operations notation?". Mathematics Stack Exchange.

- Million, Elizabeth. "The Hadamard Product" (PDF). Retrieved 2 January 2012.

- Styan, George P. H. (1973). "Hadamard Products and Multivariate Statistical Analysis". Linear Algebra and Its Applications. 6: 217–240. doi:10.1016/0024-3795(73)90023-2. hdl:10338.dmlcz/102190.

- Hiai, Fumio; Lin, Minghua (February 2017). "On an eigenvalue inequality involving the Hadamard product". Linear Algebra and Its Applications. 515: 313–320. doi:10.1016/j.laa.2016.11.017.

- "Project" (PDF). buzzard.ups.edu. 2007. Retrieved 2019-12-18.

- "Arithmetic Operators + - * / \ ^ ' -". MATLAB documentation. MathWorks. Archived from the original on 24 April 2012. Retrieved 2 January 2012.

- "Matrix multiplication". An Introduction to R. The R Project for Statistical Computing. 16 May 2013. Retrieved 24 August 2013.

- Reams, Robert (1999). "Hadamard inverses, square roots and products of almost semidefinite matrices". Linear Algebra and Its Applications. 288: 35–43. doi:10.1016/S0024-3795(98)10162-3.

- Wetzstein, Gordon; Lanman, Douglas; Hirsch, Matthew; Raskar, Ramesh. "Supplementary Material: Tensor Displays: Compressive Light Field Synthesis using Multilayer Displays with Directional Backlighting" (PDF). MIT Media Lab.

- Cyganek, Boguslaw (2013). Object Detection and Recognition in Digital Images: Theory and Practice. John Wiley & Sons. p. 109. ISBN 9781118618363.