Gated recurrent unit

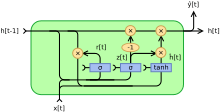

Gated recurrent units (GRUs) are a gating mechanism in recurrent neural networks, introduced in 2014 by Kyunghyun Cho et al.[1] Their performance on polyphonic music modeling and speech signal modeling was found to be similar to that of long short-term memory (LSTM). In some experiments, GRUs performed better than LSTM on smaller datasets.[2]

They have fewer parameters than LSTM, as they lack an output gate.[3]
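As a rough illustration of the size difference, the snippet below counts parameters for a single recurrent layer. It assumes the common formulation with one input matrix, one recurrent matrix, and one bias vector per gate or candidate block. The function name and the example sizes are hypothetical, and some libraries use a different bias layout, so their counts can differ.

```python
def recurrent_param_count(input_size, hidden_size, num_blocks):
    """Count parameters for num_blocks sets of (W, U, b) in one layer."""
    per_block = (
        hidden_size * input_size      # input matrix W
        + hidden_size * hidden_size   # recurrent matrix U
        + hidden_size                 # bias vector b
    )
    return num_blocks * per_block


# Assumed sizes, chosen only for illustration.
input_size, hidden_size = 128, 256

# GRU: update gate, reset gate, candidate state.
gru_params = recurrent_param_count(input_size, hidden_size, num_blocks=3)
# LSTM: input gate, forget gate, output gate, cell candidate.
lstm_params = recurrent_param_count(input_size, hidden_size, num_blocks=4)

print(gru_params, lstm_params)
```

Under these assumptions a GRU layer has three quarters of the parameters of an LSTM layer of the same size.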

Architecture

There are several variations on the full gated unit, with gating done using the previous hidden state and the bias in various combinations, and a simplified form called the minimal gated unit.

The operator $\odot$ denotes the Hadamard product in the following.

Fully gated unit

Initially, for $t = 0$, the output vector is $h_0 = 0$.
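A common way to write the update for $t \geq 1$ is sketched below. The symbol names ($x_t$, $h_t$, $z_t$, $r_t$, $\hat{h}_t$, $W$, $U$, $b$, $\sigma_g$, $\phi_h$) and the convention that $z_t$ weights the candidate state $\hat{h}_t$ are assumptions here; some sources swap the roles of $z_t$ and $1 - z_t$ in the last line.

$$
\begin{aligned}
z_t &= \sigma_g(W_z x_t + U_z h_{t-1} + b_z) \\
r_t &= \sigma_g(W_r x_t + U_r h_{t-1} + b_r) \\
\hat{h}_t &= \phi_h(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h) \\
h_t &= (1 - z_t) \odot h_{t-1} + z_t \odot \hat{h}_t
\end{aligned}
$$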

Variables

- $x_t$: input vector

- $h_t$: output vector

- $z_t$: update gate vector

- $r_t$: reset gate vector

- $W$, $U$ and $b$: parameter matrices and vector

- $\sigma_g$: The original is a sigmoid function.

- $\phi_h$: The original is a hyperbolic tangent.

Alternative activation functions are possible, provided that $\sigma_g(x) \in [0, 1]$.
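A single time step of the fully gated unit can be sketched in NumPy as follows. This is a minimal illustration, not a reference implementation: the names `gru_step` and `init_params`, the dictionary layout, and the initialization scale are assumptions made for the example. The final line assumes the convention in which the update gate weights the candidate state.

```python
import numpy as np


def sigmoid(x):
    """Logistic sigmoid, the usual choice for the gate activation."""
    return 1.0 / (1.0 + np.exp(-x))


def init_params(input_size, hidden_size, seed=0):
    """Hypothetical helper: small random W, U and zero b for z, r and h."""
    rng = np.random.default_rng(seed)
    params = {}
    for name in ("z", "r", "h"):
        params["W_" + name] = 0.1 * rng.standard_normal((hidden_size, input_size))
        params["U_" + name] = 0.1 * rng.standard_normal((hidden_size, hidden_size))
        params["b_" + name] = np.zeros(hidden_size)
    return params


def gru_step(x_t, h_prev, p):
    """One time step of a fully gated unit (illustrative sketch)."""
    z_t = sigmoid(p["W_z"] @ x_t + p["U_z"] @ h_prev + p["b_z"])  # update gate
    r_t = sigmoid(p["W_r"] @ x_t + p["U_r"] @ h_prev + p["b_r"])  # reset gate
    # Candidate state: the reset gate scales the previous state element-wise.
    h_cand = np.tanh(p["W_h"] @ x_t + p["U_h"] @ (r_t * h_prev) + p["b_h"])
    # Assumed convention: z_t weights the candidate, (1 - z_t) the old state.
    return (1.0 - z_t) * h_prev + z_t * h_cand


# Run a short random sequence, starting from the zero initial state.
params = init_params(input_size=3, hidden_size=4)
h = np.zeros(4)
for x in np.random.default_rng(1).standard_normal((5, 3)):
    h = gru_step(x, h, params)
print(h.shape)
```

Because each new state is an element-wise convex combination of the previous state and a hyperbolic tangent output, every component of `h` stays strictly between -1 and 1 when the initial state is zero.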

Alternate forms can be created by changing how $z_t$ and $r_t$ are computed:[4]

- Type 1, each gate depends only on the previous hidden state and the bias.

- Type 2, each gate depends only on the previous hidden state.

- Type 3, each gate is computed using only the bias.
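Read literally, the three descriptions correspond to dropping terms from the gate pre-activation. A sketch for the update gate is given below, using assumed symbol names ($U_z$, $b_z$, $\sigma_g$); the reset gate $r_t$ would be simplified in the same way.

$$
\begin{aligned}
\text{Type 1:}\quad z_t &= \sigma_g(U_z h_{t-1} + b_z) \\
\text{Type 2:}\quad z_t &= \sigma_g(U_z h_{t-1}) \\
\text{Type 3:}\quad z_t &= \sigma_g(b_z)
\end{aligned}
$$

Under this reading, none of the three variants uses the current input in its gates, so each has fewer gate parameters than the full unit.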

Minimal gated unit

The minimal gated unit is similar to the fully gated unit, except that the update and reset gate vectors are merged into a single forget gate. This also implies that the equation for the output vector must be changed.[5]
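One way to write the minimal gated unit is sketched below, with $\odot$ the Hadamard product, $\sigma_g$ a sigmoid, and $\phi_h$ a hyperbolic tangent. The symbol names and the placement of $f_t$ versus $1 - f_t$ in the last line are assumptions; the opposite placement also appears in some sources.

$$
\begin{aligned}
f_t &= \sigma_g(W_f x_t + U_f h_{t-1} + b_f) \\
\hat{h}_t &= \phi_h(W_h x_t + U_h (f_t \odot h_{t-1}) + b_h) \\
h_t &= (1 - f_t) \odot h_{t-1} + f_t \odot \hat{h}_t
\end{aligned}
$$

In this form the single gate $f_t$ both scales the previous state inside the candidate computation and controls how the candidate is mixed into the output.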

Variables

- $x_t$: input vector

- $h_t$: output vector

- $f_t$: forget vector

- $W$, $U$ and $b$: parameter matrices and vector

References

- [1] Cho, Kyunghyun; van Merrienboer, Bart; Gulcehre, Caglar; Bahdanau, Dzmitry; Bougares, Fethi; Schwenk, Holger; Bengio, Yoshua (2014). "Learning Phrase Representations using RNN Encoder-Decoder for Statistical Machine Translation". arXiv:1406.1078 [cs.CL].

- [2] Chung, Junyoung; Gulcehre, Caglar; Cho, Kyunghyun; Bengio, Yoshua (2014). "Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling". arXiv:1412.3555 [cs.NE].

- [3] "Recurrent Neural Network Tutorial, Part 4 – Implementing a GRU/LSTM RNN with Python and Theano – WildML". Wildml.com. Retrieved May 18, 2016.

- [4] Dey, Rahul; Salem, Fathi M. (2017-01-20). "Gate-Variants of Gated Recurrent Unit (GRU) Neural Networks". arXiv:1701.05923 [cs.NE].

- [5] Heck, Joel; Salem, Fathi M. (2017-01-12). "Simplified Minimal Gated Unit Variations for Recurrent Neural Networks". arXiv:1701.03452 [cs.NE].