Normal mapping

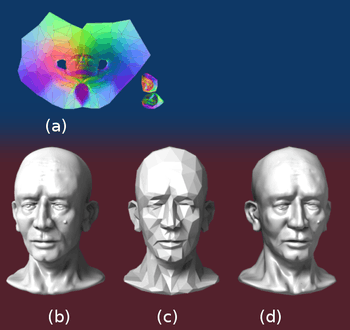

In 3D computer graphics, normal mapping, or Dot3 bump mapping, is a technique used for faking the lighting of bumps and dents – an implementation of bump mapping. It is used to add detail without using more polygons. A common use of this technique is to greatly enhance the appearance and detail of a low-polygon model by generating a normal map from a high-polygon model or a height map.
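Generating a normal map from a height map can be sketched as follows. This is a minimal illustration rather than the method of any particular tool: the function name `height_to_normals` and the `strength` parameter are hypothetical, the height map is assumed to be a list of rows of floats, and the slope is estimated with central differences clamped at the borders. The sign convention for the Y component varies between tools.

```python
import math

def height_to_normals(height, strength=1.0):
    """Return a grid of unit normals (x, y, z) for a 2D grid of heights."""
    rows, cols = len(height), len(height[0])
    normals = []
    for y in range(rows):
        row = []
        for x in range(cols):
            # Central differences; neighbours are clamped at the borders.
            left = height[y][max(x - 1, 0)]
            right = height[y][min(x + 1, cols - 1)]
            up = height[max(y - 1, 0)][x]
            down = height[min(y + 1, rows - 1)][x]
            dx = (right - left) * 0.5 * strength
            dy = (down - up) * 0.5 * strength
            # The surface z = h(x, y) has the normal (-dh/dx, -dh/dy, 1).
            length = math.sqrt(dx * dx + dy * dy + 1.0)
            row.append((-dx / length, -dy / length, 1.0 / length))
        normals.append(row)
    return normals
```

A flat height map yields the normal (0, 0, 1) everywhere, while a ramp rising along X tilts the normals toward negative X.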

Normal maps are commonly stored as regular RGB images where the RGB components correspond to the X, Y, and Z coordinates, respectively, of the surface normal.
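The storage scheme can be illustrated with a short sketch. It assumes the common convention of 8 bits per channel, with each component remapped linearly from [-1, 1] to [0, 255]; the function names `encode_normal` and `decode_normal` are hypothetical.

```python
import math

def encode_normal(n):
    """Map a unit normal with components in [-1, 1] to an 8-bit RGB triple."""
    return tuple(int((c * 0.5 + 0.5) * 255.0 + 0.5) for c in n)

def decode_normal(rgb):
    """Map an 8-bit RGB triple back to a unit normal."""
    v = [c / 255.0 * 2.0 - 1.0 for c in rgb]
    # Quantization leaves the vector slightly off unit length, so renormalize.
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)
```

Under this assumed mapping, the normal (0, 0, 1) is stored as the color (128, 128, 255), and decoding that color recovers the original direction up to quantization error.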

History

In 1978, James Blinn described how the normals of a surface could be perturbed to make geometrically flat faces have a detailed appearance.[1] The idea of taking geometric details from a high-polygon model was introduced in "Fitting Smooth Surfaces to Dense Polygon Meshes" by Krishnamurthy and Levoy, Proc. SIGGRAPH 1996,[2] where this approach was used for creating displacement maps over NURBS. In 1998, two papers were presented with key ideas for transferring details with normal maps from high- to low-polygon meshes: "Appearance Preserving Simplification", by Cohen et al., SIGGRAPH 1998,[3] and "A general method for preserving attribute values on simplified meshes" by Cignoni et al., IEEE Visualization '98.[4] The former introduced the idea of storing surface normals directly in a texture, rather than displacements, though it required the low-detail model to be generated by a particular constrained simplification algorithm. The latter presented a simpler approach that decouples the high- and low-polygon meshes and allows the recreation of any attributes of the high-detail model (color, texture coordinates, displacements, etc.) in a way that does not depend on how the low-detail model was created. The combination of storing normals in a texture with the more general creation process is still used by most currently available tools.

How it works

To calculate the Lambertian (diffuse) lighting of a surface, the unit vector from the shading point to the light source is dotted with the unit vector normal to that surface, and the result is the intensity of the light on that surface. A polygonal model of a sphere, for example, can only approximate the shape of the surface. By using a 3-channel bitmap textured across the model, more detailed normal vector information can be encoded. Each channel in the bitmap corresponds to a spatial dimension (X, Y and Z). This adds much more detail to the surface of a model, especially in conjunction with advanced lighting techniques.
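The dot product described above can be sketched in a few lines. The function names are hypothetical, vectors are plain tuples, and clamping negative values to zero is an assumption commonly made so that surfaces facing away from the light receive no diffuse light.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def lambert(normal, point, light_pos):
    """Diffuse intensity at `point` for a point light at `light_pos`."""
    to_light = normalize(tuple(l - p for l, p in zip(light_pos, point)))
    n = normalize(normal)
    # Clamp so that surfaces facing away from the light stay unlit.
    return max(0.0, sum(a * b for a, b in zip(n, to_light)))
```

For a light placed to the side of a flat polygon whose geometric normal is (0, 0, 1), the result is zero, whereas a perturbed normal such as (1, 0, 1) read from a normal map gives an intensity of about 0.71 at the same point. This difference is what makes a flat face appear bumpy.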

Spaces

Spatial dimensions differ depending on the space in which the normal map was encoded. A straightforward implementation encodes normals in object-space, so that red, green, and blue components correspond directly with X, Y, and Z coordinates. In object-space the coordinate system is constant.

However, object-space normal maps cannot be easily reused on multiple models, as the orientation of the surfaces differs. Since color texture maps can be reused freely, and normal maps tend to correspond with a particular texture map, it is desirable for artists that normal maps have the same property.

Normal map reuse is made possible by encoding maps in tangent space. The tangent space is a vector space which is tangent to the model's surface. The coordinate system varies smoothly (based on the derivatives of position with respect to texture coordinates) across the surface.
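For a single triangle, the derivatives of position with respect to the texture coordinates can be obtained by solving a small linear system. The following is a sketch under simplifying assumptions: the function names are hypothetical, the frame is computed per triangle with no averaging across shared vertices, and the resulting vectors are neither normalized nor orthogonalized.

```python
def sub(a, b):
    """Component-wise difference of two tuples."""
    return tuple(x - y for x, y in zip(a, b))

def triangle_tangent_frame(p0, p1, p2, uv0, uv1, uv2):
    """Return (dP/du, dP/dv) for a triangle with positions p and UVs uv."""
    e1, e2 = sub(p1, p0), sub(p2, p0)
    du1, dv1 = sub(uv1, uv0)
    du2, dv2 = sub(uv2, uv0)
    det = du1 * dv2 - du2 * dv1
    if det == 0:
        raise ValueError("degenerate UV mapping")
    r = 1.0 / det
    # Solve e1 = du1*T + dv1*B and e2 = du2*T + dv2*B for T and B.
    tangent = tuple((a * dv2 - b * dv1) * r for a, b in zip(e1, e2))
    bitangent = tuple((b * du1 - a * du2) * r for a, b in zip(e1, e2))
    return tangent, bitangent

def tangent_to_object(n_ts, tangent, bitangent, normal):
    """Express a tangent-space normal in the space of the frame vectors."""
    return tuple(n_ts[0] * tangent[i] + n_ts[1] * bitangent[i] + n_ts[2] * normal[i]
                 for i in range(3))
```

With this frame, the tangent-space vector (0, 0, 1) always maps to the geometric normal of the surface, which is why the same map can be applied to differently oriented surfaces.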

Tangent space normal maps can be identified by their dominant purple color, corresponding to a vector facing directly out from the surface. See "Calculating tangent space" below.

Calculating tangent space

To find the perturbation in the normal, the tangent space must be correctly calculated.[5] Most often the normal is perturbed in a fragment shader after applying the model and view matrices. Typically the geometry provides a normal and a tangent. The tangent is part of the tangent plane and can be transformed simply with the linear part of the matrix (the upper 3×3). However, the normal needs to be transformed by the inverse transpose of that matrix. Most applications will want the cotangent (more commonly called the bitangent) to match the transformed geometry (and associated UVs). So instead of forcing the cotangent to be perpendicular to the tangent, it is generally preferable to transform the cotangent just like the tangent. Let t be the tangent, b be the cotangent, n be the normal, M3x3 be the linear part of the model matrix, and V3x3 be the linear part of the view matrix.
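Under these definitions the transformed vectors can be written as follows. This is a sketch that assumes column vectors multiplied on the right of the matrices (with row vectors the order of the factors is reversed); the primes marking the transformed vectors and the shorthand M and V for M3x3 and V3x3 are notation introduced only here.

$$
\begin{aligned}
t' &= V M \, t \\
b' &= V M \, b \\
n' &= \left( (V M)^{-1} \right)^{T} n = \left( V^{-1} \right)^{T} \left( M^{-1} \right)^{T} n
\end{aligned}
$$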

Normal mapping in video games

Interactive normal map rendering was originally only possible on PixelFlow, a parallel rendering machine built at the University of North Carolina at Chapel Hill. It was later possible to perform normal mapping on high-end SGI workstations using multi-pass rendering and framebuffer operations[6] or on low-end PC hardware with some tricks using paletted textures. However, with the advent of shaders in personal computers and game consoles, normal mapping became widely used in commercial video games starting in late 2003. Normal mapping's popularity for real-time rendering is due to its good ratio of quality to processing requirements compared with other methods of producing similar effects. Much of this efficiency is made possible by distance-indexed detail scaling, a technique which selectively decreases the detail of the normal map of a given texture (cf. mipmapping), meaning that more distant surfaces require less complex lighting simulation. Many authoring pipelines use high-resolution models baked into low- or medium-resolution in-game models augmented with normal maps.
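Reducing the detail of a normal map in the manner of mipmapping can be sketched as a box filter followed by renormalization. This is an illustrative assumption, not the filter used by any specific engine: the function name `downsample_normals` is hypothetical, and the input is assumed to be a grid of decoded unit normals with even dimensions.

```python
import math

def downsample_normals(normals):
    """Halve a grid of unit normals by averaging 2x2 blocks and renormalizing."""
    rows, cols = len(normals) // 2, len(normals[0]) // 2
    out = []
    for y in range(rows):
        row = []
        for x in range(cols):
            block = [normals[2 * y + dy][2 * x + dx]
                     for dy in (0, 1) for dx in (0, 1)]
            sx = sum(n[0] for n in block)
            sy = sum(n[1] for n in block)
            sz = sum(n[2] for n in block)
            length = math.sqrt(sx * sx + sy * sy + sz * sz)
            if length == 0.0:
                # Opposing normals cancelled out; fall back to "straight out".
                row.append((0.0, 0.0, 1.0))
            else:
                row.append((sx / length, sy / length, sz / length))
        out.append(row)
    return out
```

Averaging smooths out fine bumps, so each coarser level describes a flatter surface, which is consistent with distant surfaces needing less detailed lighting.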

Basic normal mapping can be implemented in any hardware that supports palettized textures. The first game console to have specialized normal mapping hardware was the Sega Dreamcast. However, Microsoft's Xbox was the first console to widely use the effect in retail games. Of the sixth-generation consoles, only the PlayStation 2's GPU lacks built-in normal mapping support, though it can be simulated using the PlayStation 2 hardware's vector units. Games for the Xbox 360 and the PlayStation 3 rely heavily on normal mapping, and these consoles belong to the first generation to make use of parallax mapping. The Nintendo 3DS has been shown to support normal mapping, as demonstrated by Resident Evil: Revelations and Metal Gear Solid: Snake Eater.

See also

- Reflection (physics)

- Ambient occlusion

- Depth map

- Baking (computer graphics)

- Tessellation (computer graphics)

References

- Blinn, Simulation of Wrinkled Surfaces, SIGGRAPH 1978

- Krishnamurthy and Levoy, Fitting Smooth Surfaces to Dense Polygon Meshes, SIGGRAPH 1996

- Cohen et al., Appearance-Preserving Simplification, SIGGRAPH 1998

- Cignoni et al., A general method for preserving attribute values on simplified meshes, IEEE Visualization 1998

- Mikkelsen, Simulation of Wrinkled Surfaces Revisited, 2008

- Heidrich and Seidel, Realistic, Hardware-accelerated Shading and Lighting, SIGGRAPH 1999

External links

- Normal Map Tutorial Per-pixel logic behind Dot3 Normal Mapping

- NormalMap-Online Free Generator inside Browser

- Normal Mapping on sunandblackcat.com

- Blender Normal Mapping

- Normal Mapping with paletted textures using old OpenGL extensions.

- Normal Map Photography Creating normal maps manually by layering digital photographs

- Normal Mapping Explained

- Simple Normal Mapper Open Source normal map generator